Introduction

Africa stands at an inflection point in the history of biotechnology governance. For more than two decades, the continent has wrestled with the challenge of regulating genetically modified organisms (GMOs) under frameworks inherited from the Cartagena Protocol on Biosafety, a landmark international treaty that, while groundbreaking when it was adopted in 2000, was designed for a world that could not yet imagine the convergence of artificial intelligence and synthetic biology. Today, that convergence is no longer hypothetical. Generative AI models trained on vast repositories of digital DNA sequences are redesigning proteins, engineering novel organisms, and accelerating biotechnology at a pace that outstrips the capacity of existing regulatory architectures, including Africa's.

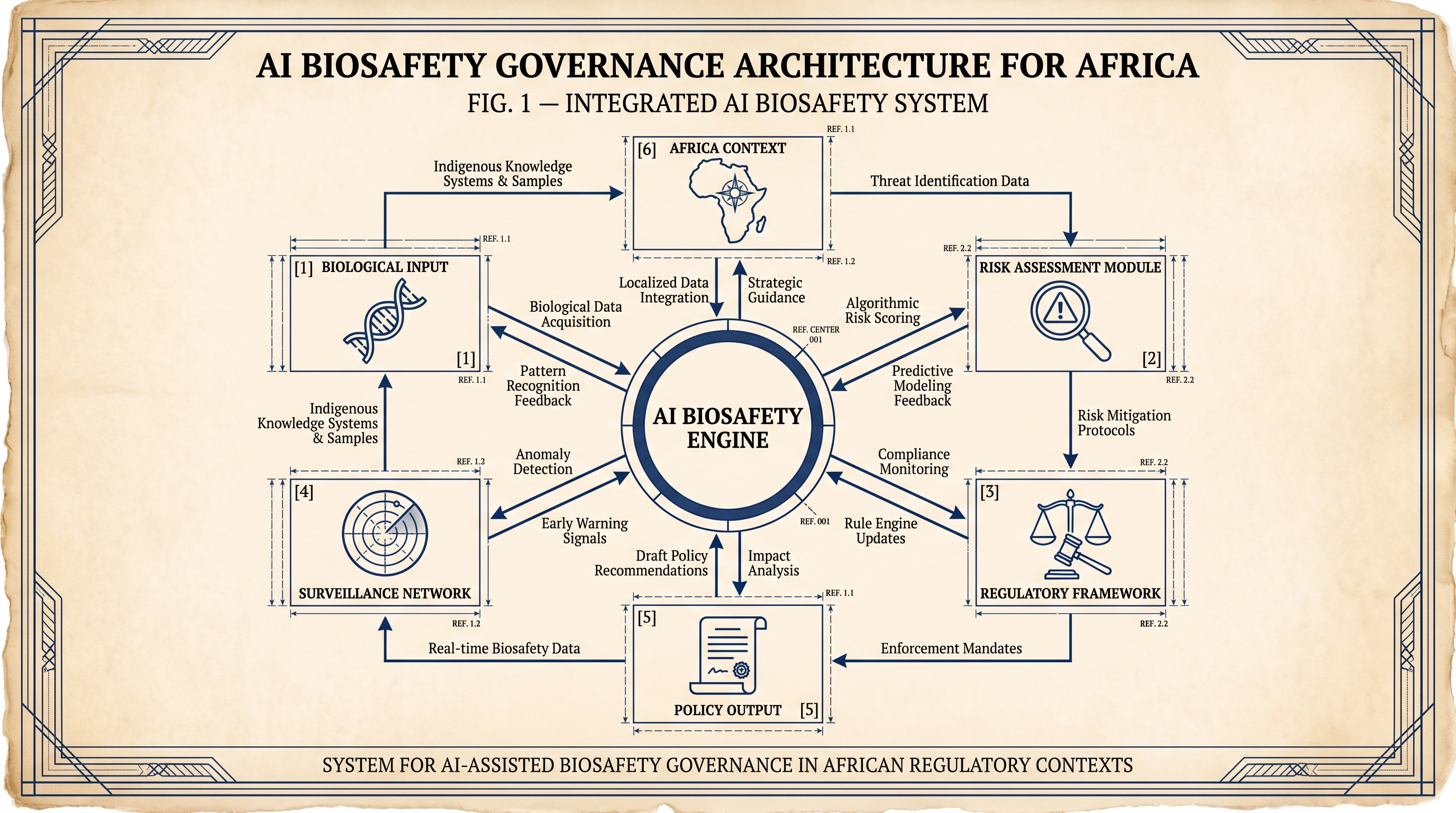

The question confronting African biosafety authorities, policymakers, and scientists is not whether AI will transform biosafety governance, but whether Africa will shape that transformation or merely absorb it. This blog post examines the current state of AI integration in African biosafety governance, the structural gaps that make the continent particularly vulnerable to AI-related biosafety failures, and the concrete policy pathways that could position Africa as a sovereign actor — rather than a passive recipient — in the emerging global AI biosafety order.

The Convergence of AI and Biosafety: What Has Changed

Traditional biosafety governance rests on a well-established logic: a developer submits a dossier describing a genetically modified organism, a national biosafety committee evaluates the evidence against established risk criteria, and a decision is issued. This process, while imperfect, is fundamentally legible. Regulators can trace the genetic modification, understand its mechanism, and apply precautionary principles grounded in scientific consensus.

Generative AI disrupts this legibility at its foundation. Structure-prediction models such as ESMFold and AlphaFold 3, combined with the emerging class of "biodesign" tools developed by firms including Ginkgo Bioworks and Zymergen (acquired by Ginkgo in 2022), make it possible to generate novel protein sequences and gene constructs that have no natural precedent and no established risk profile. The African Centre for Biodiversity, together with Third World Network and the ETC Group, described this phenomenon in their 2024 briefing as "black box biotechnology": a class of AI-generated biological designs whose internal logic is opaque even to their creators. When the organism under review was designed by an algorithm rather than a human scientist, the conventional dossier-based review process faces a fundamental epistemological challenge, because there may be no human-interpretable design rationale to evaluate.

This challenge is not merely theoretical. A 2024 biosecurity risk assessment published in Applied Biosafety found that AI-assisted synthetic biology introduces novel dual-use risks that existing frameworks are structurally unprepared to address, including the potential for AI tools to lower the technical barrier for the design of dangerous pathogens. Africa hosts several of the world's most significant zoonotic disease reservoirs, including the Ebola-endemic zones of the Democratic Republic of the Congo and East Africa's Rift Valley fever corridors. For the continent, the biosecurity implications of ungoverned AI-assisted biotechnology are therefore acute.

Africa's Regulatory Landscape: Strengths and Structural Gaps

Africa's biosafety governance architecture is more developed than is commonly acknowledged. Fifty-four of the continent's fifty-five states are parties to the Convention on Biological Diversity, and forty-seven have ratified the Cartagena Protocol on Biosafety. The African Union's Model Law on Safety in Biotechnology, adopted in 2001 and revised in 2019, provides a harmonised template that several member states have incorporated into national legislation. Regional bodies including COMESA and SADC have developed biosafety protocols that facilitate cross-border risk assessment coordination.

Despite this architecture, three structural gaps render Africa's biosafety governance system poorly equipped for the AI era.

First, the data sovereignty deficit. AI-based risk assessment tools are only as reliable as the training data on which they are built. The dominant AI models in biotechnology are trained predominantly on data from North American and European research institutions. African biodiversity, African pathogen genomics, and African agricultural ecosystems are systematically underrepresented in these datasets. A 2025 analysis published in Epidemics found that AI health security tools trained on non-African data consistently underperform in African settings, with particular failures in pathogen surveillance and outbreak prediction. When African biosafety authorities use these tools to assess AI-generated organisms, they are applying models calibrated to ecological and epidemiological contexts that may bear little resemblance to their own.
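One way to make the representation gap concrete is to audit a dataset's metadata before relying on a model trained on it. The sketch below is illustrative only: it assumes each record carries a `region` field and uses a 10% floor, both of which are hypothetical choices rather than anything prescribed by the studies cited here.

```python
from collections import Counter

def regional_shares(records, key="region"):
    """Return the fraction of records contributed by each region."""
    counts = Counter(r.get(key, "unknown") for r in records)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {region: n / total for region, n in counts.items()}

def underrepresented(shares, expected_regions, floor=0.10):
    """List expected regions whose share falls below the floor.

    Regions that are absent from the dataset count as a share of 0.
    The floor value is an assumption for illustration, not a standard.
    """
    return sorted(r for r in expected_regions if shares.get(r, 0.0) < floor)
```

A regulator could run a check of this kind on the metadata supplied with a tool and treat any flagged region as a reason to ask for local validation evidence.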

Second, the capacity asymmetry. A 2025 Brookings Institution brief documented that African voices are "significantly excluded" from global AI safety discussions, and that the continent lacks the sovereign expert capacity — AI researchers, red-teaming infrastructure, evaluation platforms — needed to independently assess the risks of advanced AI systems. In the biosafety context, this asymmetry is compounded by the fact that the firms developing AI biodesign tools are predominantly headquartered in the United States, the United Kingdom, and China, and are not subject to African regulatory jurisdiction during the design phase.

Third, the regulatory fragmentation problem. While the AU Model Law provides a harmonised template, national implementation varies enormously. Kenya, Nigeria, South Africa, and Uganda have relatively developed biosafety regulatory systems. Many other African states lack functional national biosafety committees, trained risk assessors, or the laboratory infrastructure needed to conduct independent verification of biosafety dossiers.

The Addis Ababa Declaration: A Continent Speaks

In October 2025, a landmark civil society gathering in Addis Ababa, Ethiopia, produced the Declaration on Biosafety Concerns Regarding Artificial Intelligence and Genetic Engineering in Agriculture and Food Systems — the most explicit statement yet of African civil society's position on AI in biosafety governance. The Declaration, agreed by government officials, academics, and farmers' organisations from across the continent, called for the protection of Africa's centres of crop origin and diversity — including teff, enset, coffee, sorghum, and Ethiopian mustard — from AI-assisted genetic engineering.

The Declaration's significance extends beyond its specific demands. It represents the first time that African stakeholders have collectively articulated a biosafety governance position that explicitly names AI as a distinct regulatory challenge, rather than treating it as a subset of conventional GMO governance. In doing so, it signals that African civil society is ahead of African regulatory institutions in recognising the governance gap — and that pressure for institutional reform is building from below.

AI as a Tool for Biosafety Governance: The Constructive Case

It would be a mistake to frame AI solely as a threat to African biosafety governance. Deployed appropriately, AI offers transformative tools for strengthening the very governance systems that are currently under strain.

Pathogen surveillance and early warning. Machine learning models trained on genomic, epidemiological, and environmental data can, in principle, detect outbreak signals earlier than conventional surveillance systems, in some cases by weeks. The Africa CDC's Integrated Disease Surveillance and Response framework has begun piloting AI-assisted early warning tools, and the 2024 Health Security Partnership for Africa workshop in Addis Ababa identified AI-enabled surveillance as a priority investment for achieving the "100 Days Mission."

Risk assessment automation. AI tools can accelerate the processing of biosafety dossiers, flagging potential hazards, identifying gaps in submitted evidence, and cross-referencing proposed organisms against databases of known pathogens and allergens. For under-resourced national biosafety committees, this automation could meaningfully reduce the time and expertise required for routine assessments.
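A minimal sketch of what such triage could look like follows. Everything in it is an assumption made for illustration: the `Dossier` structure, the list of required sections, and the use of exact shared 8-residue windows as a crude stand-in for a real sequence-similarity screen. It is not a validated allergenicity or pathogenicity method, and a production tool would need curated reference databases and an agreed screening protocol.

```python
from dataclasses import dataclass, field

# Hypothetical evidence sections a committee might require.
REQUIRED_SECTIONS = (
    "molecular_characterisation",
    "toxicity",
    "allergenicity",
    "environmental_risk",
)

@dataclass
class Dossier:
    applicant: str
    protein_sequence: str
    sections: dict[str, str] = field(default_factory=dict)

def missing_sections(dossier):
    """Return required sections that are absent or blank."""
    return [
        name for name in REQUIRED_SECTIONS
        if not dossier.sections.get(name, "").strip()
    ]

def kmers(seq, k):
    """Return the set of all length-k windows in a sequence."""
    seq = seq.upper()
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}

def shared_kmer_hits(sequence, reference_db, k=8):
    """Map each reference entry to the windows it shares with the query."""
    query = kmers(sequence, k)
    hits = {}
    for name, ref in reference_db.items():
        common = query & kmers(ref, k)
        if common:
            hits[name] = sorted(common)
    return hits

def triage(dossier, reference_db, k=8):
    """Flag evidence gaps and sequence matches for human review."""
    return {
        "missing_sections": missing_sections(dossier),
        "sequence_hits": shared_kmer_hits(dossier.protein_sequence, reference_db, k),
    }
```

The output is a list of flags for a human assessor, not a decision. Keeping the tool in that advisory role matters for committees that cannot independently verify how a more complex model reached its conclusion.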

Biosecurity screening. Large language models and sequence analysis tools can screen scientific literature, patent filings, and research grant databases for dual-use research of concern. Applied to the African research ecosystem, such tools could help identify emerging biosecurity risks before they materialise, supporting the kind of anticipatory governance that is essential for African policymakers.

A Policy Roadmap for African AI Biosafety Governance

Drawing on the research reviewed above and the author's experience in biosafety policy across East Africa, the following five-point roadmap offers a practical framework for African governments and regional bodies.

1. Mandate AI-specific annexes in biosafety dossiers. The AU Model Law should be amended to require that any biosafety application involving an AI-designed or AI-optimised organism include a dedicated annex describing the AI system used, the training data on which it was built, the validation process applied, and any known limitations or failure modes.

2. Establish the Africa Biosafety AI Observatory. Building on the proposed Africa AI Council, African governments should establish a dedicated observatory to monitor AI developments in biotechnology, maintain a registry of AI biodesign tools used in Africa, and publish annual risk assessments of emerging AI-biosafety intersections.

3. Invest in African AI training datasets for biosafety. The African Union's Data Policy Framework should be extended to include a specific mandate for the development of African biosafety and biodiversity AI training datasets, involving digitising existing African biosafety dossiers, genomic databases, and ecological monitoring records.

4. Build regional AI biosafety capacity through the Cartagena Protocol mechanism. African parties should advocate for the addition of an AI-specific module to the Biosafety Clearing-House, enabling African regulators to share assessments of AI-generated organisms and access peer-reviewed guidance on AI biosafety evaluation methodologies.

5. Engage the CBD process on generative biology. African parties — which collectively constitute the largest bloc within the CBD — should use their numerical strength to advocate for a formal work programme on AI and biosafety at COP17, building on the momentum of the Addis Ababa Declaration.
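To make the first roadmap item more tangible, the annex it describes could be captured as a structured record that a regulator's intake system checks for completeness. The sketch below is hypothetical: the class name, field names, and the rule that every field must be filled in are assumptions for illustration, not text from the AU Model Law or any existing submission format.

```python
from dataclasses import dataclass, field, fields

@dataclass
class AIAnnex:
    """Hypothetical AI annex mirroring the four elements in roadmap item 1."""
    ai_system: str            # name and version of the design tool used
    training_data: str        # description of the data the tool was built on
    validation_process: str   # how the tool's outputs were validated
    known_limitations: list[str] = field(default_factory=list)

def annex_gaps(annex):
    """Return the names of annex fields that were left empty.

    An empty limitations list is treated as a gap, so an applicant who
    knows of none would have to say so explicitly (an assumed rule).
    """
    gaps = []
    for f in fields(annex):
        value = getattr(annex, f.name)
        if isinstance(value, str):
            empty = not value.strip()
        else:
            empty = len(value) == 0
        if empty:
            gaps.append(f.name)
    return gaps
```

A structured annex of this kind would also feed naturally into the registry proposed in item 2, since each accepted dossier would yield a machine-readable record of which tool was used and how it was validated.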

Conclusion: Sovereignty as the Organising Principle

The history of biotechnology governance in Africa is, in part, a history of frameworks designed elsewhere being applied to African contexts with imperfect results. The Cartagena Protocol was finalised and adopted in Montreal. The risk assessment methodologies used by African biosafety committees were developed in European and North American laboratories. The AI tools now being deployed in African biosafety applications were trained on data from the Global North.

This pattern need not continue. Africa possesses the scientific talent, the institutional architecture, and — through the CBD and the AU — the multilateral leverage to shape the governance of AI in biosafety on its own terms. The Addis Ababa Declaration demonstrates that African civil society is ready to lead. The question is whether African governments and regional bodies will follow.

The stakes are high. AI-assisted biotechnology is advancing at a pace that will not wait for governance to catch up. Every year that African biosafety authorities spend applying 20th-century frameworks to 21st-century technologies is a year in which AI-generated organisms could enter African ecosystems without adequate oversight. The time for anticipatory governance is now.

Frequently Asked Questions

What is the Cartagena Protocol and why does it matter for AI biosafety in Africa? The Cartagena Protocol on Biosafety is an international treaty under the Convention on Biological Diversity that regulates the transboundary movement of living modified organisms (LMOs). It matters for AI biosafety because it is the primary international framework governing GMOs in Africa, but it was designed before AI-generated organisms existed and contains no provisions specifically addressing AI-assisted biotechnology.

How does AI change the risk profile of genetically modified organisms? AI biodesign tools can generate novel protein sequences and gene constructs with no natural precedent, making conventional risk assessment — which relies on comparison with known organisms — less reliable. The opacity of AI-generated designs also makes it harder for regulators to understand the mechanism of action of a proposed organism, complicating the application of precautionary principles.

Which African countries have the most developed biosafety regulatory systems? Kenya, Nigeria, South Africa, and Uganda have relatively developed national biosafety regulatory systems. Many other African states lack functional national biosafety committees, trained risk assessors, or the laboratory infrastructure needed for independent verification of biosafety dossiers.

What is the Addis Ababa Declaration on Biosafety and AI? The Addis Ababa Declaration, agreed in October 2025, is a civil society statement calling for the protection of African centres of crop diversity from AI-assisted genetic engineering and for democratic, community-driven governance of biotechnology in Africa.

What is the "100 Days Mission" and how does AI support it? The 100 Days Mission is a global health security target to have safe and effective diagnostics, therapeutics, and vaccines ready within 100 days of a pandemic threat being identified. AI can accelerate several steps in this process, including pathogen characterisation, vaccine candidate design, and clinical trial optimisation.

References

- African Centre for Biodiversity, Third World Network, and ETC Group. Black Box Biotechnology: Integration of Artificial Intelligence with Synthetic Biology. Briefing paper for CBD COP16, September 2024.

- De Haro, L.P., et al. "Biosecurity Risk Assessment for the Use of Artificial Intelligence in Synthetic Biology." Applied Biosafety, 2024. https://journals.sagepub.com/doi/10.1089/apb.2023.0031

- Standley, C.J., et al. "Artificial intelligence for health security in Africa: Benefits, risks and opportunities." Epidemics, Volume 53, December 2025. https://doi.org/10.1016/j.epidem.2025.100870

- Wiaterek, J., Abungu, C., and Okolo, C.T. "Building regional capacity for AI safety and security in Africa." Brookings Institution, May 2025.

- African Centre for Biodiversity and MELCA Ethiopia. Addis Ababa Declaration on Biosafety Concerns Regarding Artificial Intelligence and Genetic Engineering in Agriculture and Food Systems. November 2025.