The Inconsistency Crisis in DURC Review

A landmark editorial published in Frontiers in Bioengineering and Biotechnology in May 2026 has exposed a troubling reality at the heart of global biosecurity governance: expert reviewers assessing the same dual-use research of concern (DURC) project can disagree by up to four levels on a five-point risk scale. In a study of 18 expert reviewers and 49 synthetic biology students evaluating four real-world projects using the US government's DURC framework, the variation in perceived risk was so wide that two experts looking at the same H5N1 transmission study could reach conclusions ranging from "low concern" to "critical risk."

This is not a minor calibration problem. DURC — research that, while conducted for legitimate scientific purposes, could be misused to pose a biological threat — is one of the most consequential categories of scientific oversight. The 15 categories defined by US DURC policy include research that enhances pathogen transmissibility, virulence, drug resistance, immune evasion, or the ability to evade detection. When expert reviewers disagree by four levels on whether a study falls into these categories, the entire oversight framework is undermined.

What BioScreens DURC Analysis Offers

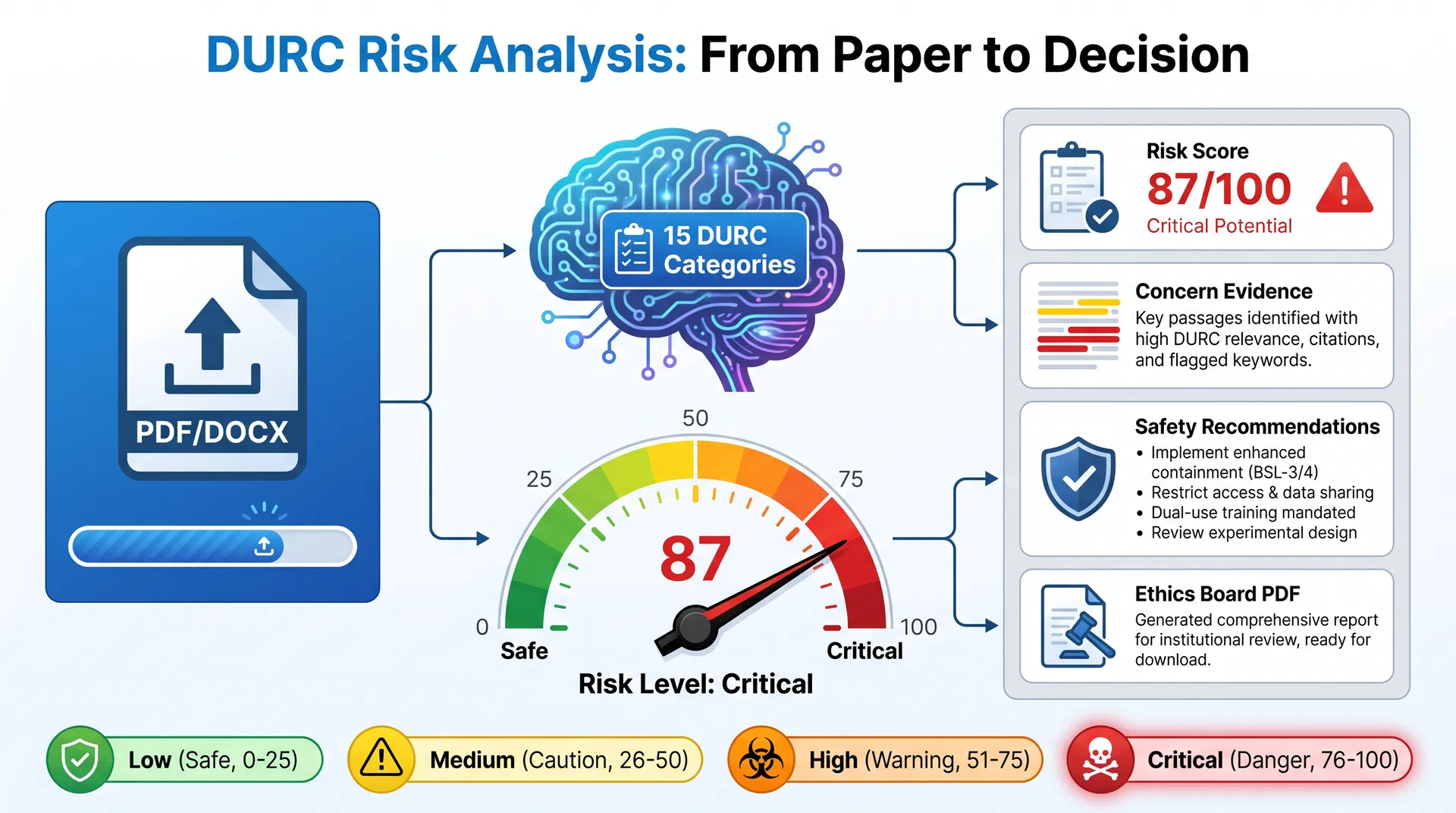

The BioScreens platform at bioscreens.org has built an AI-powered DURC analysis tool designed precisely to address this inconsistency. Researchers, ethics boards, and institutional review bodies can upload a PDF, DOCX, or paste research text directly into the platform. The AI engine evaluates the submission against all 15 US DURC policy categories and returns a quantified risk score from 0 to 100, classified as Low (0–25), Medium (26–50), High (51–75), or Critical (76–100).
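The banding described above can be sketched in a few lines of Python. This is only an illustration of the published score ranges, not the platform's implementation: the function name `classify_durc_score` is hypothetical, and the sketch assumes scores are whole numbers, since the ranges (0–25, 26–50, and so on) leave fractional values undefined.

```python
def classify_durc_score(score: int) -> str:
    """Map a 0-100 risk score to the band named in the article.

    Hypothetical helper: only the band boundaries come from the article;
    the function name and integer-only input are assumptions.
    """
    if isinstance(score, bool) or not isinstance(score, int):
        raise TypeError("score must be a whole number")
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score <= 25:
        return "Low"
    if score <= 50:
        return "Medium"
    if score <= 75:
        return "High"
    return "Critical"


# The two scores mentioned in this article:
print(classify_durc_score(87))  # Critical
print(classify_durc_score(72))  # High
```

Keeping the boundaries in one small function makes the edge cases explicit: 25 is still Low, 26 is already Medium.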

The platform's example, an H5N1 Transmission Study scoring 87/100 (Critical Risk), flags three specific concerns: enhanced transmissibility via gain-of-function, airborne transmission potential, and vaccine resistance implications. It illustrates the level of granularity that AI analysis can provide. Rather than resting on a subjective expert judgment, the score is derived from evidence-backed concern identification: each DURC category match is tied to the specific passages in the research document that triggered it.

| DURC Risk Level | Score Range | Example Concern | BioScreens Recommendation |
|---|---|---|---|
| Low | 0–25 | Minor methodological overlap | Standard institutional review |
| Medium | 26–50 | Potential pathogen enhancement | Enhanced oversight, smart contract generated |
| High | 51–75 | Demonstrated virulence increase | BSL-3 containment, access restriction |
| Critical | 76–100 | Pandemic potential identified | BSL-4, regulatory disclosure, publication redaction |

The platform supports multi-language OCR, processing documents in English, French, German, and Arabic — a critical capability given that DURC is a global concern, not a US-only regulatory matter. The output includes a formatted PDF report ready for submission to an institutional ethics board, with risk score, concern evidence, and prioritised safety recommendations.

Infographic: BioScreens DURC Analysis workflow — from document upload to ethics board PDF report.

The Global Governance Gap

The Frontiers editorial is not the only recent signal that DURC governance is under strain. In April 2026, Australia announced a new security unit specifically tasked with tackling AI-enabled bioweapons — a direct response to the convergence of large language models and synthetic biology capabilities. The unit's mandate includes monitoring advances in DNA synthesis technologies and associated screening practices.

Malaysia's April 2026 WHO-sponsored Responsible Use of Life Sciences workshop, held under the Western Pacific Regional Office, brought together government officials, biosafety experts, and researchers to strengthen dual-use research governance frameworks. The workshop's focus on preventing, detecting, and responding to biological threats — whether accidental or deliberate — reflects a growing recognition that DURC oversight cannot be left to individual institutional review boards operating with inconsistent standards.

The Frontiers editorial also highlighted the proposed creation of a US National Biosafety and Biosecurity Agency (NBBA) that would unify oversight of DURC, enhanced potential pandemic pathogens (ePPPs), nucleic acid synthesis screening, pathogen management, and laboratory animal regulations under a single federal body. The proposal reflects frustration with the current fragmented system, in which DURC oversight is distributed across the National Institutes of Health, the Centers for Disease Control and Prevention, and individual institutional biosafety committees with varying levels of expertise and resources.

The Smart Contract Innovation

One of BioScreens' most distinctive features is its Smart Contract Insurance System, which activates automatically for any DURC analysis that returns a Medium risk score or above. When a researcher's submission crosses the Medium threshold, the platform generates a binding smart contract, calculates a risk-adjusted insurance premium, and opens an escrow account — creating an immutable, hash-chained audit ledger of the compliance event.
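To make the idea of a hash-chained audit ledger concrete, here is a minimal Python sketch of the general technique. It is not BioScreens' implementation, which the article does not describe: the entry layout, the all-zero genesis value, the choice of SHA-256, and the event names are all assumptions made for illustration. Each entry stores the hash of the entry before it, so editing any earlier record invalidates every hash that follows.

```python
import hashlib
import json

# Assumed placeholder standing in for "no previous entry".
GENESIS_HASH = "0" * 64


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(ledger: list, payload: dict) -> None:
    """Append a record that is chained to the current tail of the ledger."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    ledger.append({
        "prev_hash": prev_hash,
        "payload": payload,
        "hash": entry_hash(prev_hash, payload),
    })


def verify(ledger: list) -> bool:
    """Recompute every hash in order; any edited entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical compliance events for a submission scored Medium or above.
ledger = []
append_entry(ledger, {"event": "analysis_scored", "score": 87})
append_entry(ledger, {"event": "smart_contract_generated"})
append_entry(ledger, {"event": "escrow_opened"})
print(verify(ledger))
```

A chain like this makes tampering detectable rather than impossible. Whether the ledger is immutable in practice depends on where the hashes are stored and who is able to rewrite the whole chain.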

The penalty framework is tiered: Tier 1 violations (minor procedural non-compliance) result in a $500 penalty and a formal written warning; Tier 4 violations (critical breaches with potential public safety implications) result in a $50,000 penalty, permanent platform exclusion, and mandatory regulatory disclosure. Coverage amounts scale from $50,000 for Medium-risk research to $2,000,000 for Critical-risk research.

This automated compliance enforcement mechanism addresses a longstanding weakness in DURC governance: the gap between risk identification and accountability. A researcher who receives a Critical risk score on a DURC analysis and proceeds without institutional review now creates a documented, financially consequential record of non-compliance, not merely a paper trail that may or may not be reviewed.

The Path to Standardisation

The Frontiers editorial's call for "standardized methods to improve agreement, accuracy, and cost-effectiveness" in DURC review is, in effect, a call for tools like BioScreens. The platform's AI engine does not replace human expert judgment — it provides a consistent, evidence-backed baseline that human reviewers can interrogate, challenge, and supplement. An expert who disagrees with a BioScreens risk score of 72/100 must engage with the specific passages and DURC categories that generated that score, rather than simply offering a different subjective assessment.

For institutions seeking to strengthen their DURC review processes, BioScreens offers free access to its DURC Analysis tool at bioscreens.org. The platform's multi-language support, ethics board PDF output, and smart contract compliance system make it a practical starting point for any institution that takes its biosecurity obligations seriously.

The inconsistency crisis in DURC review is not a failure of expert knowledge. It is a failure of standardisation. AI-powered analysis tools are the most promising path to closing that gap before the next generation of gain-of-function research makes the stakes even higher.