The AI Search Blueprint for Scientists: How SEO, AEO, GEO, and LLMO Are Reshaping Science Communication

Slug: ai-seo-aeo-geo-llmo-science-communication-blueprint
Excerpt: As AI-powered search engines replace traditional Google queries for millions of users, scientists and researchers face a new visibility challenge. This post applies the AI Search Blueprint framework (SEO, AEO, GEO, and LLMO) to science communication, showing how researchers can ensure their work is discovered, cited, and trusted by both human audiences and AI systems.
Tags: Science Communication, SEO, AEO, LLMO, GEO, AI Search, Research Visibility, Biosafety, Open Science
Category: Science Communication & AI
Read Time: 10 min
Author: Dr. Odongo Oduor Joseph

Introduction: When AI Becomes the Gatekeeper of Scientific Knowledge

A fundamental shift is underway in how people access scientific information. For decades, the dominant pathway was linear: a researcher published in a peer-reviewed journal, the paper was indexed in PubMed or Google Scholar, a journalist or science communicator found it via a keyword search, and a public audience eventually encountered a simplified version through a news article or blog post. Each step in this chain was mediated by human judgment — editors, search algorithms, communicators, and readers all applied their own filters.

That chain is breaking. Today, a growing proportion of science-related queries are answered not by a list of blue links but by a generative AI system (ChatGPT, Google's AI Overviews, Perplexity, Microsoft Copilot, or Claude) that synthesises information from multiple sources and delivers a direct, conversational answer. Industry analyses of search behaviour suggest that AI-generated answers now intercept a significant fraction of informational queries that would previously have driven traffic to scientific websites and blogs. Some of these analyses report that, in certain sectors, organic click-through rates have fallen by more than 37% as AI summaries satisfy user intent without requiring a visit to the source.

For scientists, biosafety researchers, and science communicators, this transformation creates both a challenge and an opportunity. The challenge is visibility: if your research, your institution, or your expertise is not represented in the training data, knowledge graphs, and citation patterns that AI systems draw upon, you effectively cease to exist for a large and growing segment of your potential audience. The opportunity is authority: researchers who understand how AI systems evaluate, rank, and cite scientific content can position their work to become the definitive source that AI systems consistently recommend.

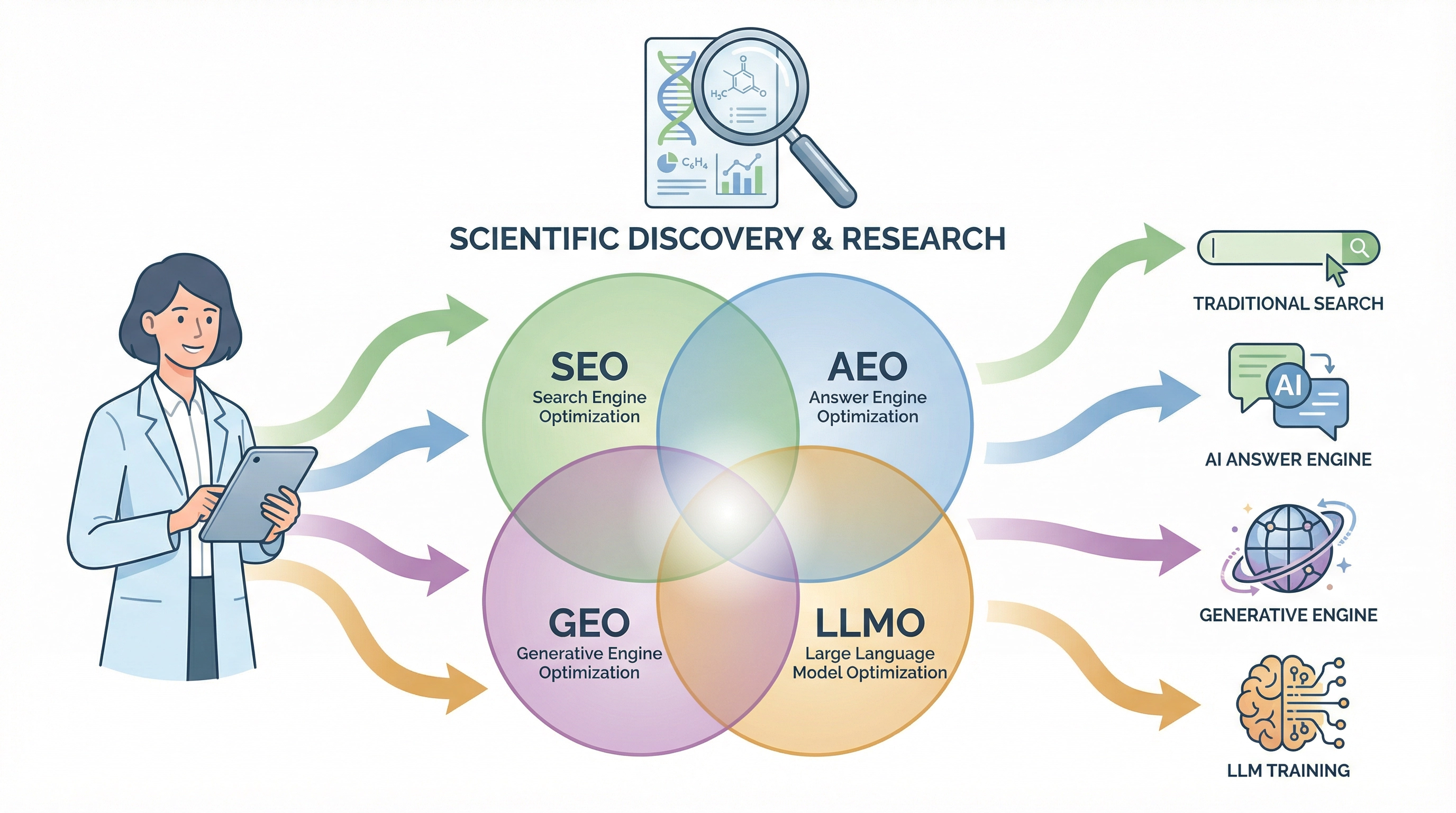

This post applies the AI Search Blueprint framework — a structured approach to optimising content for SEO, AEO (Answer Engine Optimisation), GEO (Generative Engine Optimisation), and LLMO (Large Language Model Optimisation) — to the specific context of science communication.

Understanding the Four Disciplines

The AI Search Blueprint distils a complex and often jargon-heavy landscape into four complementary disciplines. It is important to note at the outset that these are not separate strategies requiring separate workflows — they are overlapping lenses on the same underlying goal: creating content that is accurate, structured, authoritative, and machine-readable.

| Discipline | Core Focus | Primary Execution |
|---|---|---|
| SEO (Search Engine Optimisation) | Visibility in traditional search | Keywords, internal links, technical hygiene |
| AEO (Answer Engine Optimisation) | Direct answers in AI responses | FAQ structures, concise definitions, schema markup |
| GEO (Generative Engine Optimisation) | Representation in AI-generated comparisons | Structured comparisons, "versus" content, listicles |
| LLMO (Large Language Model Optimisation) | Topical authority in LLM training data | Brand ubiquity, off-site mentions, deep expertise signals |

As the AI Search Blueprint frames it: "Stop worrying about the acronyms. Start building the best answers on the internet." For scientists, this translates to a deceptively simple imperative: write content that is so accurate, so well-structured, and so clearly attributed to a credible expert that AI systems are far more likely to cite it than the alternatives.

The Science Communication Visibility Crisis

The intersection of AI search and science communication creates a specific set of problems that are worth naming precisely.

The Misinformation Amplification Problem. AI systems are trained on the internet as it exists, not as scientists wish it existed. For topics like GMO safety, vaccine efficacy, or climate change, the volume of misinformation online can dwarf the volume of peer-reviewed scientific content — particularly in formats that AI systems find easy to parse (short, direct, structured, conversational). If scientists do not produce content in AI-readable formats, the AI systems that millions of people consult for scientific information will disproportionately draw on non-scientific sources.

The Citation Invisibility Problem. A landmark paper published in Nature Biotechnology is not automatically more visible to an AI system than a well-structured blog post on the same topic. AI search systems appear to weight content partly on structural and contextual signals, such as schema markup, FAQ formatting, heading hierarchy, internal linking, and off-site brand mentions, rather than on journal impact factor alone. A researcher who publishes exclusively in paywalled journals and maintains no public-facing digital presence is, from the perspective of most AI systems, nearly invisible.

The Authority Dilution Problem. In the AI search era, authority is not conferred by institutional affiliation alone. It is built through what the AI Search Blueprint calls "brand ubiquity": the degree to which an expert's name, institution, and ideas appear consistently across multiple independent sources. A biosafety researcher whose work is discussed in policy documents, cited in open-access reviews, mentioned in podcast transcripts, and referenced in Wikipedia articles is likely to be treated by AI systems as a more authoritative source than an equally qualified researcher with an identical publication record but no public digital footprint.

Applying the Blueprint: A Framework for Scientists

1. Deep Intent: Targeting the Questions Your Audience Is Actually Asking

The AI Search Blueprint introduces the concept of "money keywords" — high-intent, specific queries where the user is ready to act on information. For scientists and science communicators, the equivalent concept is decision-point queries: the specific questions that policymakers, journalists, students, and concerned citizens ask at the moment they need to make a decision or form an opinion.

For a biosafety researcher, decision-point queries might include: "Is Bt maize safe for human consumption?", "What are the risks of gain-of-function research?", "How does CRISPR gene editing work in crops?", or "What is the difference between a GMO and a gene-edited organism?" These are not the questions that appear in the titles of peer-reviewed papers. They are the questions that appear in the search bars of non-specialist audiences — and they are precisely the questions that AI systems are now answering, with or without input from the scientific community.

The practical implication is straightforward: science communicators should build a matrix of decision-point queries relevant to their expertise and create dedicated, structured content that answers each query directly, accurately, and in language accessible to a non-specialist audience.

2. Unambiguous Structure: Writing for the Machine Reader

The AI Search Blueprint's second pillar, "Unambiguous Structure", is perhaps the most immediately actionable for scientists. LLMs do not read content the way humans do. AI search systems typically fetch a page's HTML, identify its heading hierarchy, extract text from structured lists and tables, and tend to favour passages that answer a query in their opening sentence. Several structural principles apply directly to science communication:

Use a clear H1 and logical H2 hierarchy. A blog post or science explainer should have one unambiguous title (H1) and a set of subheadings (H2s) that map directly to the sub-questions a reader might have. AI systems use this hierarchy to understand the scope and structure of the content.
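To check a draft against this principle before publishing, a short script can list a page's headings in order and flag two common problems: a missing or duplicated H1, and a jump that skips a level (for example, H1 straight to H3). The sketch below is a hypothetical helper built only on Python's standard-library `html.parser`; the class and function names are illustrative, and it assumes reasonably well-formed HTML.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collects heading elements (h1-h6) and their text, in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []    # list of (level, text) tuples
        self._current = None  # level of the heading currently open, if any
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        # html.parser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._current = int(tag[1])
            self._buffer = []

    def handle_data(self, data):
        if self._current is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if self._current is not None and tag == f"h{self._current}":
            text = " ".join("".join(self._buffer).split())  # normalise whitespace
            self.headings.append((self._current, text))
            self._current = None


def check_heading_outline(html):
    """Return a list of human-readable problems with a page's heading outline."""
    parser = HeadingCollector()
    parser.feed(html)
    parser.close()
    problems = []
    h1_count = sum(1 for level, _ in parser.headings if level == 1)
    if h1_count != 1:
        problems.append(f"expected exactly one <h1>, found {h1_count}")
    previous = 0
    for level, text in parser.headings:
        # A jump of more than one level (e.g. h1 -> h3) skips a layer of the outline.
        if previous and level > previous + 1:
            problems.append(f"'{text}' jumps from h{previous} to h{level}")
        previous = level
    return problems
```

For example, `check_heading_outline("<h1>Is Bt maize safe to eat?</h1><h3>Evidence</h3>")` returns `["'Evidence' jumps from h1 to h3"]`, while a page with one H1 followed by nested H2 and H3 headings returns an empty list.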

Answer the question first, then elaborate. The "inverted pyramid" structure familiar to journalists is also optimal for AI readability. State the direct answer to the question in the first sentence, then provide the supporting evidence, context, and nuance in subsequent sentences. This structure makes it easy for an AI system to extract a direct answer for a user query.

Use tables for comparisons. When comparing regulatory frameworks, risk categories, or scientific methodologies, structured HTML tables are among the most AI-readable formats available. A table comparing the DURC criteria under the 2012 U.S. DURC Policy and the 2024 DURC-PEPP Policy, for example, is likely to be parsed and cited by AI systems more readily than the same information presented in discursive prose.
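One way to keep comparison tables consistently machine-readable is to generate them rather than hand-edit them. The sketch below is a hypothetical Python helper (not part of any framework named in this post) that renders rows into semantic HTML with a `<caption>`, column headers, and a row header for each criterion, escaping every cell with the standard-library `html.escape`. Any sample content you pass in is your own; the helper says nothing about what either DURC policy actually contains.

```python
from html import escape


def comparison_table(caption, headers, rows):
    """Render a comparison as a semantic HTML table (caption, header row, body rows).

    headers: column titles, e.g. ["Criterion", "Framework A", "Framework B"]
    rows:    lists of cell strings; the first cell names the criterion.
    """
    lines = ["<table>", f"  <caption>{escape(caption)}</caption>", "  <thead>", "    <tr>"]
    lines += [f'      <th scope="col">{escape(h)}</th>' for h in headers]
    lines += ["    </tr>", "  </thead>", "  <tbody>"]
    for row in rows:
        if len(row) != len(headers):
            raise ValueError(f"row {row!r} has {len(row)} cells, expected {len(headers)}")
        # The first cell names the criterion being compared, so mark it as a row header.
        cells = [f'      <th scope="row">{escape(row[0])}</th>']
        cells += [f"      <td>{escape(cell)}</td>" for cell in row[1:]]
        lines += ["    <tr>", *cells, "    </tr>"]
    lines += ["  </tbody>", "</table>"]
    return "\n".join(lines)
```

Because every cell passes through `escape`, symbols common in regulatory text, such as `<` in a threshold or `&` in an agency name, do not corrupt the markup, and a row with the wrong number of cells fails loudly instead of producing a misaligned table.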

Implement FAQ schema markup. The FAQ section at the end of a science blog post is not merely a reader convenience; it is a direct signal to AI systems that the content is designed to answer specific questions. Implementing FAQ schema markup in the underlying HTML makes these question-answer pairs explicit and machine-readable, which makes them easier for search and AI systems to index and cite.
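FAQ markup is usually expressed as JSON-LD using the schema.org `FAQPage` type, in which each `Question` carries a `name` and an `acceptedAnswer` of type `Answer`. The sketch below is a hypothetical Python helper that builds that structure from question-answer pairs and wraps it in a `<script type="application/ld+json">` element. Treat it as illustrative: validate real pages with a structured-data testing tool, and note that Google announced in 2023 that it would show FAQ rich results mainly for well-known government and health websites, so the markup is best treated as a clarity signal rather than a guarantee of special display.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def faq_script_tag(pairs):
    """Wrap the JSON-LD in the <script> element that is placed in the page's HTML."""
    body = json.dumps(faq_jsonld(pairs), indent=2, ensure_ascii=False)
    # "\/" is a valid JSON escape for "/", so this keeps the data identical while
    # preventing an answer that contains "</script>" from closing the tag early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

The pairs can come straight from a post's own FAQ section, for instance ("What is GEO and how does it apply to biosafety research?", "GEO (Generative Engine Optimisation) involves creating structured comparison content..."), so the visible FAQ and the markup never drift apart.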

3. Ubiquitous Authority: Building the Off-Site Footprint

The third pillar of the AI Search Blueprint, "Ubiquitous Authority", requires the most sustained effort but yields the most durable results. The core insight is that AI systems do not evaluate authority in isolation; they evaluate it relationally. When an AI system draws on ten different sources on a topic and the same researcher's name is cited in eight of them, that researcher is far more likely to be presented as the definitive authority on the topic.

For scientists, building this off-site footprint requires a deliberate multi-channel strategy:

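Wikipedia and Wikidata. Wikipedia is among the most heavily weighted sources in LLM training data. Ensuring that your research area, your institution, and your key findings are accurately represented in relevant Wikipedia articles, and that your publications are cited as references, is one of the highest-return investments a science communicator can make, provided edits follow Wikipedia's conflict-of-interest guideline. Wikidata, Wikipedia's structured-data sister project, complements this by recording researchers, institutions, and publications as machine-readable items that can be linked to identifiers such as ORCID iDs and DOIs.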

Open-access publications and preprints. The full text of paywalled journal articles is largely invisible to AI systems, even when the abstract and metadata are public. Open-access publications, preprints on bioRxiv or medRxiv, and institutional repository deposits make the full text of your research available for AI indexing. The AI Search Blueprint's principle of "exposing the raw HTML" applies directly: content that is locked behind a paywall or published only as a PDF is generally less accessible to AI systems than content published in open HTML formats.

Podcast appearances and YouTube transcripts. The AI Search Blueprint identifies podcasts and YouTube transcripts as among the most heavily weighted off-site authority signals for AI systems. A scientist who appears on a science podcast discussing their research generates a transcript that AI systems can parse, index, and cite. This is a particularly underutilised channel for researchers in the life sciences and biosafety fields.

Third-party listicles and comparison articles. AI systems disproportionately draw on structured listicle articles — "Top 10 biosafety researchers to follow", "Best resources for understanding CRISPR regulation", "Leading voices in GMO science communication" — when formulating answers to broad authority queries. Contributing to or being featured in such articles is a high-leverage strategy for building AI-visible authority.

The 90-Day Science Communication Blueprint

Drawing directly from the AI Search Blueprint's execution framework, the following 90-day plan is adapted for scientists and science communicators:

Days 1–30 (Map): Identify the ten most critical decision-point queries in your research area. Search each query in ChatGPT, Perplexity, and Google AI Overviews. Document which sources the AI systems are currently citing. Identify the gaps — queries where the AI response is inaccurate, incomplete, or draws on non-scientific sources.
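The Map phase is essentially a small audit dataset. A spreadsheet works, but the sketch below shows the same bookkeeping in Python under stated assumptions: each observation (one query, one AI system) is recorded by hand, `judged_accurate` is the researcher's own assessment, and `trusted_domains` is a hypothetical list you define yourself (for example, journals, regulators, and your institution). The helper then lists the gap queries, meaning those where an answer was judged inaccurate or cited no trusted source. All names are illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class AnswerAudit:
    """One hand-recorded observation: what one AI system returned for one query."""
    query: str
    engine: str                                           # e.g. "ChatGPT", "Perplexity"
    cited_domains: list[str] = field(default_factory=list)
    judged_accurate: bool = True                          # the researcher's own judgement


def find_gaps(audits, trusted_domains):
    """Map each gap query to the reasons it was flagged.

    A query is a gap if any engine's answer was judged inaccurate or incomplete,
    or if that answer cited no domain in trusted_domains (exact string match,
    so "www.example.org" and "example.org" count as different domains).
    """
    gaps = {}
    for audit in audits:
        reasons = []
        if not audit.judged_accurate:
            reasons.append(f"{audit.engine}: inaccurate or incomplete answer")
        if not any(domain in trusted_domains for domain in audit.cited_domains):
            reasons.append(f"{audit.engine}: no trusted source cited")
        if reasons:
            gaps.setdefault(audit.query, []).extend(reasons)
    return gaps
```

Keeping one record per engine, rather than one per query, preserves which system produced which gap, which helps decide where the Build phase should focus first.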

Days 31–60 (Build): Create dedicated, structured content pages for each of the ten queries. Each page should include a direct answer in the first paragraph, a structured FAQ section with schema markup, at least one comparison table, and links to open-access primary sources. Publish these on your institutional website, personal research blog, or a platform like Medium or Substack that AI systems index readily.
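Before each page is published, its structure can be checked against the four Build-phase items. The sketch below uses Python's standard-library `html.parser` and `json` modules with rough, assumed heuristics: a short first paragraph (60 words or fewer, an arbitrary threshold) stands in for "direct answer first", a parseable JSON-LD block of type `FAQPage` for the FAQ schema, any `<table>` for the comparison table, and any link to `doi.org` as a proxy for primary sources. A DOI link does not prove a source is open access, so that item still needs a human check. Class and function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class PageFeatures(HTMLParser):
    """Collects the structural features the Build-phase checklist asks for."""

    def __init__(self):
        super().__init__()
        self.table_count = 0
        self.links = []              # href values of <a> elements
        self.jsonld_blocks = []      # raw text of JSON-LD <script> elements
        self.first_paragraph = None  # whitespace-normalised text of the first <p>
        self._in_jsonld = False
        self._in_first_p = False
        self._script_buffer = []
        self._p_buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "table":
            self.table_count += 1
        elif tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])
        elif tag == "script" and attrs.get("type") == "application/ld+json":
            self._in_jsonld, self._script_buffer = True, []
        elif tag == "p" and self.first_paragraph is None and not self._in_first_p:
            self._in_first_p, self._p_buffer = True, []

    def handle_data(self, data):
        if self._in_jsonld:
            self._script_buffer.append(data)
        elif self._in_first_p:
            self._p_buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.jsonld_blocks.append("".join(self._script_buffer))
            self._in_jsonld = False
        elif tag == "p" and self._in_first_p:
            self.first_paragraph = " ".join("".join(self._p_buffer).split())
            self._in_first_p = False


def audit_page(html, max_answer_words=60):
    """Return a pass/fail flag for each checklist item (heuristics, not guarantees)."""
    page = PageFeatures()
    page.feed(html)
    page.close()
    has_faq_schema = False
    for block in page.jsonld_blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # malformed JSON-LD does not count as FAQ markup
        if isinstance(data, dict) and data.get("@type") == "FAQPage":
            has_faq_schema = True
    first_words = (page.first_paragraph or "").split()
    return {
        "direct_answer_first": 0 < len(first_words) <= max_answer_words,
        "faq_schema": has_faq_schema,
        "comparison_table": page.table_count >= 1,
        "links_to_doi": any("doi.org/" in href for href in page.links),
    }
```

Returning a dictionary of named flags, rather than a single score, makes it obvious which checklist item a page is missing.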

Days 61–90 (Hustle): Identify the podcasts, YouTube channels, and third-party review articles that AI systems are currently citing for your target queries. Reach out to the creators with a specific, value-oriented pitch — offer to contribute a guest post, appear as a podcast guest, or provide expert commentary for a review article. Secure three to five off-site mentions that link back to your structured content.

Implications for Biosafety and GMO Science Communication

The AI search transformation has particularly high stakes for biosafety and GMO science communication. These are fields where the gap between scientific consensus and public perception is large, where misinformation is abundant and well-structured, and where the consequences of public misunderstanding — regulatory failure, policy paralysis, erosion of trust in science — are severe.

The AI Search Blueprint framework offers biosafety researchers a concrete pathway to close this gap. By producing structured, FAQ-rich, open-access content that directly addresses the decision-point queries of policymakers, journalists, and concerned citizens — and by building the off-site authority footprint that causes AI systems to treat biosafety researchers as the definitive source on these questions — the scientific community can reclaim the AI-mediated information environment from the misinformation ecosystem that currently dominates it.

Key Takeaways

The AI search revolution is not a threat to science communication — it is an opportunity to build a more direct, more authoritative, and more durable connection between scientific expertise and public understanding. The AI Search Blueprint's four disciplines — SEO, AEO, GEO, and LLMO — are not marketing tools borrowed from the commercial world; they are the new grammar of scientific visibility in an AI-mediated information environment. Scientists who master this grammar will find their work cited, trusted, and acted upon by audiences they could never reach through traditional publication channels alone.

Frequently Asked Questions

What is LLMO in science communication? LLMO (Large Language Model Optimisation) refers to strategies that make scientific content more likely to be cited, summarised, and recommended by AI systems like ChatGPT, Perplexity, and Google AI Overviews. It involves building topical authority through structured content, open-access publication, and off-site brand mentions.

How is AEO different from SEO for scientists? SEO (Search Engine Optimisation) focuses on ranking in traditional search results. AEO (Answer Engine Optimisation) focuses on having your content selected as the direct answer in AI-generated responses. AEO requires FAQ structures, schema markup, and direct, concise answers to specific questions.

Why is open-access publication important for AI visibility? AI systems generally cannot access the full text of paywalled content, even when abstracts and metadata are public. Open-access publications, preprints, and institutional repository deposits make the full text of your research available for AI training and citation. Paywalled articles are therefore largely invisible to the AI systems that millions of people now use for scientific information.

What is GEO and how does it apply to biosafety research? GEO (Generative Engine Optimisation) involves creating structured comparison content — "versus" articles, listicles, and comparison tables — that AI systems draw upon when answering comparative questions. For biosafety researchers, this might include structured comparisons of regulatory frameworks, risk assessment methodologies, or GMO approval processes across different jurisdictions.

How can scientists build AI-visible authority? Scientists can build AI-visible authority by ensuring their work is represented in Wikipedia, publishing in open-access formats, appearing on podcasts and YouTube channels whose transcripts are indexed by AI systems, and being featured in third-party review articles and listicles that AI systems cite for relevant queries.

What is the most important first step for a scientist new to AI search optimisation? The most important first step is to search your key research topics in ChatGPT and Perplexity and document what sources the AI systems are currently citing. This reveals the gaps where scientific content is absent or underrepresented, and identifies the highest-priority content creation opportunities.