Introduction: The Invisible Threat Hidden in Plain Text

Every year, tens of thousands of life sciences research papers are published across preprint servers, peer-reviewed journals, and institutional repositories. The vast majority of this literature advances human welfare — developing vaccines, improving diagnostics, and deepening our understanding of microbial ecology. Yet embedded within this torrent of scientific output lies a small but critically important subset of publications that describe research with dual-use potential: findings that, in the wrong hands, could be weaponised, misappropriated, or used to cause deliberate harm.

Detecting these papers manually is a task that exceeds the capacity of any biosecurity review board. The sheer volume of publications, the technical complexity of the language, and the often subtle nature of dual-use risk make human-only screening both slow and inconsistent. This is precisely the gap that the Biosecurity Risk Detection Algorithm GPT was designed to fill.

Accessible directly at https://chatgpt.com/g/g-darmv2aII-biosecurity-risk-detection-algorithm-gpt, this specialised AI tool applies advanced text mining, Natural Language Processing (NLP), and machine learning to identify mentions of harmful biological agents, dual-use research of concern (DURC), and other biosecurity risks embedded within scientific literature. It represents a significant step forward in the automation of biosecurity governance — and a powerful resource for researchers, biosafety officers, journal editors, and policy analysts alike.

The Problem: Why Manual Screening Is No Longer Sufficient

The challenge of dual-use research oversight is not new. Since 2001, when the mousepox IL-4 experiment was published and the anthrax letter attacks followed later that year, the scientific community has grappled with how to balance open science with biosecurity responsibility. The Fink Report (2004) and the subsequent establishment of the National Science Advisory Board for Biosecurity (NSABB) in the United States formalised the concept of DURC: research that, while conducted for legitimate purposes, could be misused to pose a biological threat to public health, agriculture, or national security.

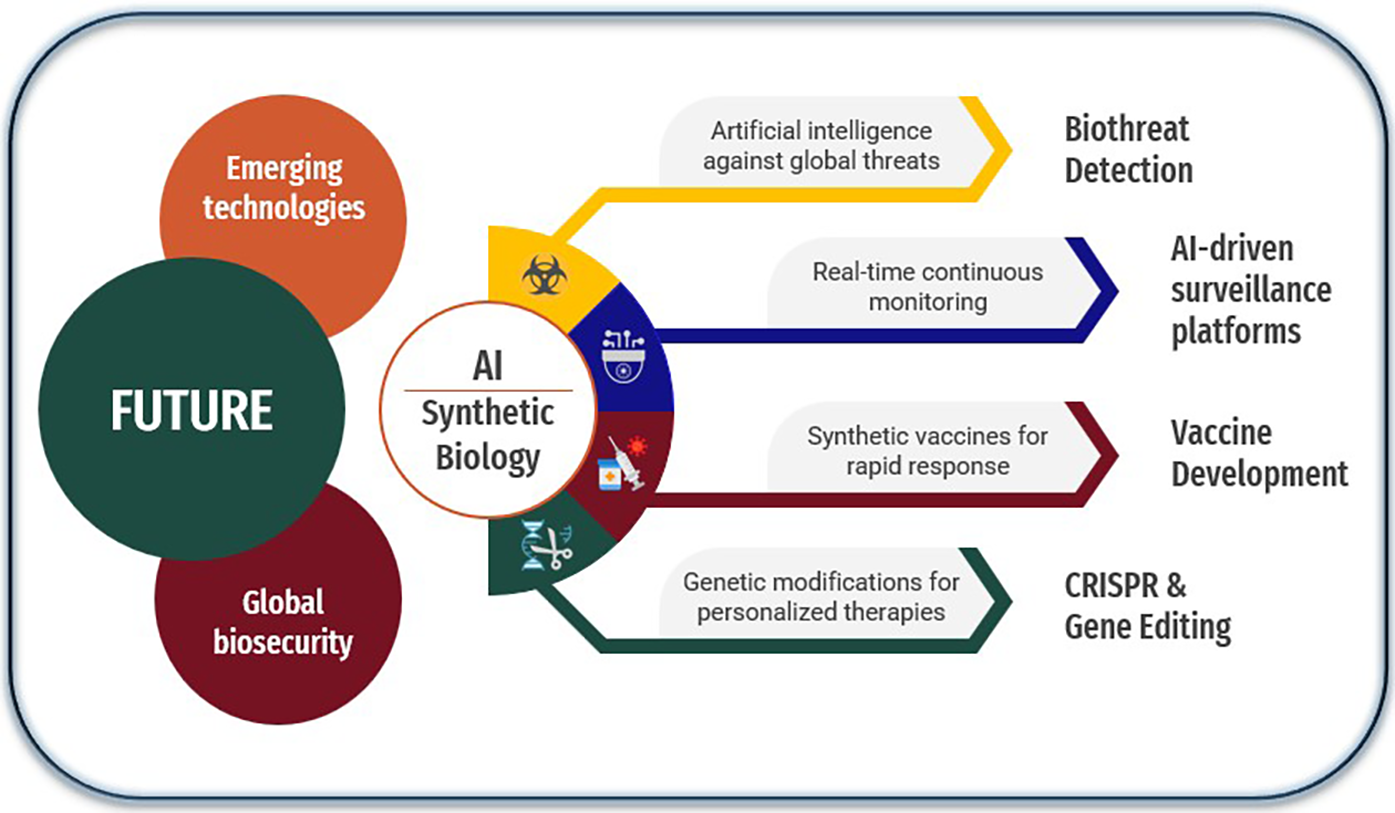

The problem has intensified dramatically in the intervening two decades. The democratisation of synthetic biology tools, the proliferation of CRISPR-based gene editing platforms, and the global expansion of life sciences research capacity have collectively expanded both the volume of potentially sensitive research and the geographic distribution of laboratories capable of conducting it. Meanwhile, the rise of preprint servers such as bioRxiv and medRxiv has created a parallel publication track that largely bypasses traditional peer review — and with it, the informal biosecurity gatekeeping that journal editors sometimes provide.

Against this backdrop, the limitations of manual screening are stark. A biosafety officer reviewing a stack of grant applications or a journal editor evaluating a submitted manuscript can only apply their expertise to the documents in front of them. They cannot systematically scan the entire corpus of published literature for emerging risk patterns, cross-reference agent mentions against select agent lists, or flag subtle combinations of methodologies that, taken together, might constitute a biosecurity concern even if no single element does.

The Solution: NLP and Machine Learning as Biosecurity Infrastructure

The Biosecurity Risk Detection Algorithm GPT addresses these limitations by applying the same class of language models that power modern search engines and document summarisation tools to the specific domain of biosecurity risk assessment. The tool is built on a foundation of three interlocking capabilities.

Text Mining for Agent Identification. At its most basic level, the tool performs sophisticated named entity recognition (NER) to identify mentions of biological agents — pathogens, toxins, select agents, and potential biological weapons precursors — within research text. Unlike simple keyword matching, NER-based approaches can distinguish between a paper that mentions Yersinia pestis in a historical epidemiological context and one that describes novel gain-of-function modifications to the same organism. This contextual sensitivity is essential for reducing false positives while maintaining high recall of genuine risks.
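The difference between matching a name and reading its context can be illustrated with a minimal sketch. The tool's internals are not public, so this is not its implementation: it swaps a trained NER model for a plain dictionary lookup plus a context window, and the names `WATCHLIST`, `HISTORICAL_CUES`, `MODIFICATION_CUES`, and `find_mentions` are hypothetical.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately tiny watchlist mapping aliases to canonical names.
# A real system would load a maintained list rather than hard-code entries.
WATCHLIST = {
    "yersinia pestis": "Yersinia pestis",
    "y. pestis": "Yersinia pestis",
    "bacillus anthracis": "Bacillus anthracis",
}

# Hypothetical cue words used to guess the context of each mention.
HISTORICAL_CUES = {"historical", "epidemic", "outbreak", "century"}
MODIFICATION_CUES = {"gain-of-function", "engineered", "modified", "enhanced"}


@dataclass
class Mention:
    agent: str    # canonical agent name
    start: int    # character offset of the mention
    context: str  # "modification", "historical", or "unclear"


def find_mentions(text: str, window: int = 80) -> list[Mention]:
    """Find watchlist aliases and label each by cue words found nearby."""
    lowered = text.lower()
    mentions = []
    for alias, canonical in WATCHLIST.items():
        pattern = r"\b" + re.escape(alias) + r"\b"
        for match in re.finditer(pattern, lowered):
            lo = max(0, match.start() - window)
            hi = min(len(lowered), match.end() + window)
            words = set(re.findall(r"[a-z][a-z\-]+", lowered[lo:hi]))
            if words & MODIFICATION_CUES:
                context = "modification"
            elif words & HISTORICAL_CUES:
                context = "historical"
            else:
                context = "unclear"
            mentions.append(Mention(canonical, match.start(), context))
    return sorted(mentions, key=lambda m: m.start)
```

Under these assumptions, a sentence about a fourteenth-century epidemic and a sentence about engineered variants both produce a mention of the same organism, but with different `context` labels. A plain keyword match would treat the two identically.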

Dual-Use Research Classification. Beyond agent identification, the tool applies machine learning classifiers trained to recognise the linguistic and methodological signatures of DURC. These include descriptions of enhanced transmissibility or pathogenicity, synthesis routes for dangerous compounds, methods for acquiring or concentrating select agents, and techniques for defeating host immune responses or medical countermeasures.
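To make "classifiers trained to recognise linguistic signatures" concrete, here is a toy multinomial naive Bayes classifier in pure Python. It is a sketch of the general technique only. Which model the tool actually uses is not stated, and the class name, labels, and four training sentences are invented for illustration.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayes:
    """Multinomial naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts, labels):
        self.class_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokenize(text))
        self.vocab = {w for c in self.word_counts.values() for w in c}
        return self

    def predict(self, text: str) -> str:
        total_docs = sum(self.class_counts.values())
        scores = {}
        for label, n_docs in self.class_counts.items():
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            score = math.log(n_docs / total_docs)  # log prior
            for word in tokenize(text):
                if word in self.vocab:  # words never seen in training are ignored
                    score += math.log((counts[word] + 1) / denom)
            scores[label] = score
        return max(scores, key=scores.get)


# Hypothetical toy training data; a usable model needs a large labelled corpus.
TRAIN = [
    ("mutations that enhanced transmissibility of the virus between mammals", "needs_review"),
    ("modifications increased pathogenicity and evaded vaccine induced immunity", "needs_review"),
    ("survey of soil microbial diversity across agricultural sites", "routine"),
    ("diagnostic assay improved detection of seasonal infections in clinics", "routine"),
]
model = NaiveBayes().fit([t for t, _ in TRAIN], [label for _, label in TRAIN])
```

With this toy data, `model.predict("the variant showed enhanced transmissibility and pathogenicity")` returns `"needs_review"`, because those words occur only in the sentences labelled that way. Four examples cannot generalise, so the sketch shows the mechanism rather than a usable screen.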

Contextual Risk Scoring. Perhaps most importantly, the tool does not simply flag individual terms or sentences in isolation. It analyses the broader context of a research paper (its stated objectives, methodology, results, and discussion) to generate a holistic risk assessment. A paper describing the aerosol transmission characteristics of a select agent in the context of developing a vaccine delivery system will receive a different risk profile than one describing the same characteristics in the context of enhancing virulence.
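The idea that identical findings can score differently depending on the surrounding sections can be sketched with a hand-weighted heuristic. This is an assumption-laden stand-in, not the tool's scoring method: the section weights, cue phrases, and the `risk_score` function are all hypothetical, and a production system would more plausibly learn such weights from data.

```python
# Hypothetical section weights: methods and results count more than framing.
SECTION_WEIGHTS = {
    "objectives": 1.0,
    "methodology": 2.0,
    "results": 2.0,
    "discussion": 1.0,
}

# Hypothetical cue phrases, chosen only to mirror the example in the text.
TOPIC_CUES = ("aerosol transmission",)
CONCERN_CUES = ("enhancing virulence", "increased transmissibility")
MITIGATING_CUES = ("vaccine", "countermeasure", "diagnostic")


def count_hits(text: str, cues: tuple[str, ...]) -> int:
    """Count how many distinct cue phrases appear in the text."""
    lowered = text.lower()
    return sum(cue in lowered for cue in cues)


def risk_score(sections: dict[str, str]) -> float:
    """Combine per-section signals into one document-level score."""
    total = 0.0
    for name, text in sections.items():
        weight = SECTION_WEIGHTS.get(name, 1.0)
        signal = (
            count_hits(text, TOPIC_CUES)
            + 2.0 * count_hits(text, CONCERN_CUES)
            - 0.5 * count_hits(text, MITIGATING_CUES)
        )
        total += weight * signal
    return max(total, 0.0)  # a score never goes below zero


shared_result = "We characterised aerosol transmission of the pathogen."
vaccine_paper = {
    "objectives": "Develop an inhaled vaccine delivery system.",
    "results": shared_result,
}
virulence_paper = {
    "objectives": "Investigate factors enhancing virulence.",
    "results": shared_result,
}
```

Both papers contain the same results sentence, yet `risk_score(vaccine_paper)` is lower than `risk_score(virulence_paper)` because the stated objectives shift the total in opposite directions.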

Practical Applications: Who Should Use This Tool and How

The Biosecurity Risk Detection Algorithm GPT at https://chatgpt.com/g/g-darmv2aII-biosecurity-risk-detection-algorithm-gpt has immediate practical utility across a wide range of professional contexts.

| User Type | Application | Benefit |
|---|---|---|
| Biosafety Officers | Pre-submission screening of institutional research | Flags DURC before external publication |
| Journal Editors | Manuscript triage and risk flagging | Reduces editorial burden on sensitive submissions |
| Research Funders | Grant application review | Identifies dual-use potential before funding decisions |
| Intelligence Analysts | Open-source literature monitoring | Tracks emerging biological risk signals in published science |
| Policy Analysts | Regulatory gap analysis | Identifies research areas outpacing governance frameworks |
| Educators | Teaching biosecurity awareness | Demonstrates real-world risk identification to students |

For biosafety officers at research institutions, the tool offers a first-pass screening capability that can be applied to all outgoing publications, grant applications, and conference presentations, not just those that researchers self-identify as potentially sensitive. This matters because scientists may misjudge the dual-use potential of their own work, not out of malice but because deep domain expertise can create blind spots to cross-domain risk implications.

Limitations and the Importance of Human Oversight

No automated tool can replace human expert judgment in biosecurity risk assessment, and the Biosecurity Risk Detection Algorithm GPT is designed to augment rather than supplant human review. The tool's performance is bounded by the training data and policy frameworks on which its classifiers were built. Emerging research areas — synthetic biology applications that did not exist five years ago, novel gene drive architectures, or AI-designed proteins with no natural analogues — may not be well-represented in its risk models. Regular updates to the underlying models are essential to maintain coverage of the rapidly evolving life sciences landscape.

Additionally, the tool operates on text as submitted. It cannot assess laboratory practices, researcher intent, institutional biosafety culture, or the geopolitical context in which research is being conducted — all of which are relevant to a comprehensive biosecurity risk assessment. These limitations do not diminish the tool's value; they contextualise it. The Biosecurity Risk Detection Algorithm GPT is most powerful when integrated into a layered biosecurity governance system in which automated screening provides a consistent, scalable first pass and human experts focus their attention on the cases that automated tools flag for deeper review.
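The layered arrangement described above, with an automated first pass and human attention reserved for flagged cases, can be sketched as a simple triage step. The `Screened` type, the `triage` function, and the 0.5 threshold are hypothetical. The scores are assumed to come from an upstream screening model and to lie between 0 and 1.

```python
from typing import NamedTuple


class Screened(NamedTuple):
    doc_id: str
    score: float  # assumed output of an upstream screening model, 0 to 1


def triage(items: list[Screened], review_threshold: float = 0.5):
    """Split screened documents into a human-review queue and a cleared list.

    The review queue is ordered highest score first, so that experts see
    the most concerning documents before the borderline ones.
    """
    flagged = sorted(
        (item for item in items if item.score >= review_threshold),
        key=lambda item: item.score,
        reverse=True,
    )
    cleared = [item for item in items if item.score < review_threshold]
    return flagged, cleared


batch = [Screened("doc-a", 0.2), Screened("doc-b", 0.9), Screened("doc-c", 0.6)]
review_queue, cleared = triage(batch)
```

Where the threshold sits is a policy decision rather than a technical one. A lower value sends more documents to reviewers and reduces the chance of a missed case, at the cost of reviewer time.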

Conclusion: Building the Biosecurity Surveillance Infrastructure of the Future

The publication of dangerous research is not a hypothetical risk. The reconstruction of the 1918 influenza virus, the synthesis of poliovirus from scratch, and the H5N1 gain-of-function controversy are all documented cases in which published science raised serious biosecurity concerns — and in which the existing governance infrastructure was found to be inadequate. The Biosecurity Risk Detection Algorithm GPT represents a meaningful contribution to closing the gap between the pace of scientific publication and the capacity of biosecurity governance systems to keep up.

By making advanced NLP and machine learning-based biosecurity screening accessible to researchers, biosafety officers, journal editors, and policy analysts, the tool democratises a capability that has historically been available only to well-resourced national security agencies. In a world where the life sciences are advancing faster than any single institution can monitor, that democratisation is not merely convenient — it is essential.

Explore the tool directly at https://chatgpt.com/g/g-darmv2aII-biosecurity-risk-detection-algorithm-gpt and begin integrating AI-powered biosecurity screening into your research governance workflow today.

References

- National Research Council. Biotechnology Research in an Age of Terrorism (Fink Report). National Academies Press, 2004.

- United States Government. United States Government Policy for Oversight of Life Sciences Dual Use Research of Concern. 2012.

- World Health Organization. Responsible Life Sciences Research for Global Health Security. WHO, 2010.

- Biosecurity Risk Detection Algorithm GPT: https://chatgpt.com/g/g-darmv2aII-biosecurity-risk-detection-algorithm-gpt