The life sciences are drowning in data. High-throughput sequencing instruments can now generate terabytes of sequence data in a single run. Proteomics, metabolomics, and transcriptomics add layers of molecular complexity. Electronic health records, environmental monitoring systems, and biosurveillance networks contribute streams of clinical and ecological data. The challenge facing modern biology is no longer the acquisition of data; it is the organisation, interpretation, and operationalisation of that data into knowledge that can guide decisions, drive discovery, and support governance.

Data informatics bioframeworks are the conceptual and technical architectures that make this transformation possible. They are the scaffolding upon which the edifice of modern biological science is increasingly built — and their design has profound implications for the quality, reproducibility, and utility of biological knowledge.

What Is a Data Informatics Bioframework?

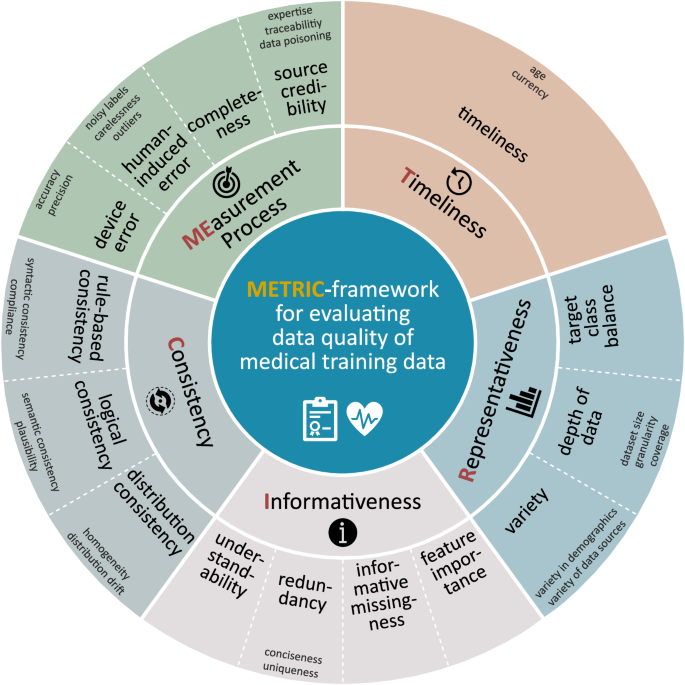

A data informatics bioframework is a structured system for the collection, organisation, annotation, integration, and analysis of biological data. It encompasses ontologies (formal representations of domain knowledge), data standards (shared formats and vocabularies that enable interoperability), analytical pipelines (automated workflows for processing and interpreting data), and governance protocols (rules for data access, sharing, and quality assurance).

The concept draws on several converging fields: bioinformatics, which applies computational methods to biological data; knowledge engineering, which develops formal representations of domain expertise; and data science, which provides the statistical and machine learning tools for pattern recognition and prediction. A well-designed bioframework does not merely store data — it structures it in ways that make it interpretable by both humans and machines, enabling the kind of automated reasoning and cross-dataset integration that is increasingly essential for large-scale biological research.

The Role of Ontologies

Ontologies are the intellectual backbone of data informatics bioframeworks. An ontology is a formal, machine-readable representation of the concepts and relationships within a domain: a structured vocabulary that enables different databases, research groups, and computational systems to communicate using a shared language. The Gene Ontology (GO), which provides standardised terms for describing gene products in terms of molecular function, cellular component, and biological process, is perhaps the most widely used biological ontology, with applications in genomics, drug discovery, and disease research across thousands of publications and databases.
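
To make the idea of a shared, machine-readable vocabulary concrete, here is a minimal Python sketch of an ontology as a graph of is_a relationships. The `EX:` identifiers, term labels, and gene names are hypothetical placeholders invented for illustration; they are not real Gene Ontology terms, and real ontologies carry many more relationship types.

```python
# Toy is_a hierarchy (child term -> parent terms). The "EX:" identifiers
# and labels are hypothetical placeholders, not real ontology terms.
IS_A = {
    "EX:0001": [],                      # biological process (root)
    "EX:0002": ["EX:0001"],             # metabolic process
    "EX:0003": ["EX:0002"],             # carbohydrate metabolism
    "EX:0004": ["EX:0001"],             # response to stimulus
    "EX:0005": ["EX:0002", "EX:0004"],  # a term with two parents
}

# Hypothetical annotations, as they might arrive from different databases.
ANNOTATIONS = {
    "geneA": {"EX:0003"},
    "geneB": {"EX:0004"},
    "geneC": {"EX:0005"},
}


def ancestors(term, is_a=IS_A):
    """Return every term reachable from `term` by following is_a links."""
    seen = set()
    stack = list(is_a[term])
    while stack:
        parent = stack.pop()
        if parent not in seen:
            seen.add(parent)
            stack.extend(is_a[parent])
    return seen


def genes_annotated_to(term, annotations=ANNOTATIONS):
    """Genes annotated to `term` directly or through any descendant term."""
    return sorted(
        gene
        for gene, terms in annotations.items()
        if any(t == term or term in ancestors(t) for t in terms)
    )


print(genes_annotated_to("EX:0002"))
```

Because the hierarchy is explicit, a query for a broad term also retrieves genes annotated only to its more specific descendants, which is the kind of automated reasoning a flat keyword list cannot support.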

In the context of biosafety and biosecurity, ontologies serve a particularly important function. The Biosafety Ontology (BSO) and related frameworks provide structured vocabularies for describing containment levels, risk groups, exposure routes, and regulatory requirements — enabling the automated integration of biosafety data across institutional and national boundaries. A biosafety ontology that is shared across regulatory agencies, research institutions, and international bodies can support the kind of harmonised, data-driven biosafety assessment that is increasingly necessary in a world of globalised research and cross-border biological threats.

Analytical Pipelines and Automation

The second major component of a data informatics bioframework is the analytical pipeline — the automated sequence of computational steps that transforms raw data into interpretable results. In genomics, a typical pipeline might include quality control of raw sequencing reads, alignment to a reference genome, variant calling, annotation, and functional interpretation. In biosurveillance, a pipeline might integrate environmental sampling data, pathogen sequence data, epidemiological records, and climate variables to generate risk predictions.
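
The idea of a pipeline as an ordered sequence of steps can be sketched in a few lines of Python. This is a toy illustration only: the `Pipeline` class, the step names, the quality threshold, and the three example reads are all assumptions made for the sketch, and real genomics pipelines delegate each stage to dedicated tools.

```python
from dataclasses import dataclass, field


@dataclass
class Pipeline:
    """Runs registered steps in order and records how many records survive each."""
    steps: list = field(default_factory=list)  # (name, function) pairs
    log: list = field(default_factory=list)    # (name, record count) pairs

    def step(self, name):
        """Decorator that appends a function to the pipeline under `name`."""
        def register(func):
            self.steps.append((name, func))
            return func
        return register

    def run(self, data):
        for name, func in self.steps:
            data = func(data)
            self.log.append((name, len(data)))
        return data


pipeline = Pipeline()


@pipeline.step("quality_control")
def quality_control(reads, min_mean_quality=20):
    """Keep reads whose mean base quality meets the (assumed) threshold."""
    return [(seq, q) for seq, q in reads if sum(q) / len(q) >= min_mean_quality]


@pipeline.step("drop_ambiguous")
def drop_ambiguous(reads):
    """Discard reads that contain an ambiguous base call."""
    return [(seq, q) for seq, q in reads if "N" not in seq]


# Hypothetical reads: (sequence, per-base quality scores).
reads = [
    ("ACGT", [30, 32, 31, 29]),
    ("ACNT", [30, 30, 30, 30]),
    ("GGCC", [10, 12, 9, 11]),
]
result = pipeline.run(reads)
print(result)
print(pipeline.log)
```

Recording the record count after each step is a small example of the provenance information that makes a pipeline's behaviour auditable.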

The design of robust, reproducible analytical pipelines is one of the central challenges of modern bioinformatics. The "reproducibility crisis" in science — the finding that a substantial proportion of published results cannot be independently replicated — has been partly attributed to the use of ad hoc, poorly documented computational workflows. Frameworks such as Snakemake, Nextflow, and the Common Workflow Language (CWL) address this problem by enabling the specification of pipelines in a standardised, portable format that can be shared, versioned, and executed consistently across different computational environments.
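
Two ideas behind such workflow systems, declaring dependencies between steps and versioning the workflow definition itself, can be sketched with the Python standard library (`graphlib` requires Python 3.9 or later). This is not how Snakemake, Nextflow, or CWL are implemented; the step names and the `execution_order` and `workflow_fingerprint` helpers are hypothetical and exist only to illustrate the principle.

```python
import hashlib
import json
from graphlib import TopologicalSorter

# Hypothetical workflow: step name -> names of the steps it depends on.
WORKFLOW = {
    "quality_control": [],
    "alignment": ["quality_control"],
    "variant_calling": ["alignment"],
    "annotation": ["variant_calling"],
    "report": ["annotation", "quality_control"],
}


def execution_order(workflow):
    """Return the steps in an order that respects every declared dependency."""
    return list(TopologicalSorter(workflow).static_order())


def workflow_fingerprint(workflow):
    """Hash a canonical JSON form so any change to the definition is detectable."""
    canonical = json.dumps(workflow, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


print(execution_order(WORKFLOW))
print(workflow_fingerprint(WORKFLOW))
```

Storing the fingerprint alongside the results gives a simple way to check later that two analyses were produced by the same workflow definition.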

Integration with Machine Learning

The integration of machine learning into data informatics bioframeworks is transforming what is possible in biological data analysis. Where traditional statistical methods require explicit specification of the relationships to be tested, machine learning models can discover complex, non-linear patterns in high-dimensional data without prior specification — a capability that is particularly valuable in biology, where the relationships between genotype, phenotype, environment, and disease are rarely simple or fully understood.

In genomics and structural biology, deep learning models have achieved striking results in tasks ranging from protein structure prediction (AlphaFold, whose predictions approach experimental accuracy for many proteins) to variant pathogenicity classification to the identification of regulatory elements in non-coding DNA. In drug discovery, graph neural networks are accelerating the identification of novel therapeutic compounds by learning the relationship between molecular structure and biological activity. In biosurveillance, recurrent neural networks and transformer models are being applied to the prediction of outbreak trajectories and the identification of emerging pathogens from metagenomic data.

The LLM-Enabling Knowledge Framework (LEKF), a conceptual architecture developed to enhance the reasoning capabilities of large language models in scientific domains, represents a frontier application of bioframework thinking. By structuring domain knowledge in ways that are optimised for LLM consumption, LEKF-type frameworks aim to improve the quality and reliability of AI-generated scientific outputs by reducing hallucination and deepening domain-specific reasoning.

Bioframeworks in Biosafety and Regulatory Science

The application of data informatics bioframeworks to biosafety and regulatory science is an area of growing importance. Regulatory agencies responsible for the oversight of GMOs, synthetic biology products, and novel biological agents face the challenge of making complex, data-intensive risk assessments under conditions of scientific uncertainty and time pressure. Bioframeworks that integrate genomic, phenotypic, ecological, and toxicological data — and that can generate structured, auditable risk assessments — have the potential to significantly improve both the quality and the efficiency of regulatory decision-making.

The development of AI-assisted regulatory tools — systems that can screen novel biological entities against databases of known hazards, identify data gaps, and generate preliminary risk assessments — is an active area of research and development. These tools do not replace human expert judgment; they augment it, freeing regulatory scientists to focus on the complex, context-dependent aspects of risk assessment that require genuine expertise and cannot be automated.
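
A deliberately simple Python sketch shows the shape of such a screening step: it checks a submission for missing fields (data gaps) and for annotations that match a list of hazard terms, then hands the result to a human reviewer. The field names, hazard terms, and example record are hypothetical and do not reflect any agency's actual requirements or any real tool.

```python
# Hypothetical required fields and hazard vocabulary, for illustration only.
REQUIRED_FIELDS = ("organism", "risk_group", "exposure_routes", "containment_level")
HAZARD_TERMS = {"toxin production", "antimicrobial resistance"}


def screen(record):
    """Return a preliminary, auditable summary for a human expert to review."""
    data_gaps = [name for name in REQUIRED_FIELDS if not record.get(name)]
    flagged = sorted(HAZARD_TERMS & set(record.get("annotations", [])))
    return {
        "data_gaps": data_gaps,
        "flagged_terms": flagged,
        # The sketch never issues a decision; it only prioritises expert attention.
        "needs_expert_review": bool(data_gaps or flagged),
    }


# Hypothetical submission with two missing fields and one matching annotation.
submission = {
    "organism": "example strain",
    "risk_group": 2,
    "annotations": ["antimicrobial resistance", "sporulation"],
}
summary = screen(submission)
print(summary)
```

The output is a structured record rather than a verdict, which keeps the reasoning traceable and leaves the context-dependent judgment with the regulatory scientist.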

Building the Infrastructure for Biological Knowledge

The construction of robust data informatics bioframeworks is not merely a technical project — it is an institutional and political one. It requires sustained investment in data infrastructure, training of a new generation of bioinformaticians and data scientists, development of international data-sharing agreements, and the cultivation of a scientific culture that values data quality, documentation, and openness as highly as it values novel discoveries.

The payoff for this investment is substantial. A world in which biological data is well-organised, interoperable, and machine-interpretable is a world in which scientific discovery is faster, more reproducible, and more equitably distributed. It is a world in which biosafety assessments are more rigorous and more consistent. It is a world in which the promise of precision medicine, sustainable agriculture, and AI-driven biosecurity can be more fully realised.

Data informatics bioframeworks are, in this sense, not merely a technical infrastructure — they are a foundation for the future of biological science itself.