Antimicrobial resistance (AMR) is projected to cause 10 million deaths annually by 2050 if current trends continue, a toll exceeding the number of deaths currently caused by cancer each year. The discovery pipeline for conventional small-molecule antibiotics has largely stalled: the lipopeptides, often cited as the last genuinely novel antibiotic class to reach clinical use, were discovered in the 1980s. Antimicrobial peptides (AMPs), short amino acid sequences that typically act by disrupting bacterial membranes, a mechanism against which resistance is thought to evolve comparatively slowly, represent one of the most promising alternative modalities. Yet the space of possible peptides is astronomically large: there are 20²⁰ ≈ 10²⁶ possible sequences of exactly 20 standard amino acids, far exceeding any experimental screening capacity.

This is the kind of problem that generative deep learning is well suited to. Rather than screening the space exhaustively, a generative model learns the underlying structure of the space (the statistical regularities that distinguish active AMPs from inactive sequences) and uses that learned structure to propose novel candidates that are likely to be active. Among generative architectures, the Variational Autoencoder (VAE) occupies a particularly important position: it is principled, comparatively interpretable, and well suited to the specific challenges of biological sequence design.

What Is a Variational Autoencoder? The Mathematical Foundation

A standard autoencoder consists of two neural networks: an encoder that maps an input (in our case, a peptide sequence) to a compressed latent representation, and a decoder that maps the latent representation back to the original input. The encoder learns to compress the essential information in the input; the decoder learns to reconstruct the input from that compressed representation. The problem with standard autoencoders for generative purposes is that the latent space is not structured — there is no guarantee that points sampled from the latent space will decode to meaningful sequences.

The VAE, introduced by Kingma and Welling in 2013, solves this problem by imposing a probabilistic structure on the latent space. Instead of mapping each input to a single point in latent space, the encoder maps each input to a probability distribution — specifically, a multivariate Gaussian characterised by a mean vector μ and a variance vector σ². The decoder then receives a sample from this distribution rather than the mean directly, forcing it to learn to decode any point in the neighbourhood of the mean, not just the mean itself.

The VAE is trained by maximising the Evidence Lower BOund (ELBO):

ELBO = E[log p(x|z)] − KL[q(z|x) || p(z)]

The first term is the reconstruction term: the expected log-likelihood of the input under the decoder, given a latent vector sampled from q(z|x). Its negative is the reconstruction loss. The second term is the KL divergence, a regularisation term that penalises the approximate posterior q(z|x) for deviating from the prior p(z), which is typically a standard Gaussian N(0, I). The KL term is what gives the VAE its generative power: by pulling the posterior distributions of all training examples toward the same prior, it encourages a smooth, continuous latent space, so that interpolating between two points tends to produce meaningful intermediate sequences and sampling from the prior tends to produce novel but plausible ones.
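For the common case described here (a diagonal Gaussian posterior with mean μ and variance σ², and a standard Gaussian prior), the KL term has a well-known closed form, so it does not need to be estimated by sampling. In the expression below, d denotes the latent dimensionality and j indexes latent dimensions; both symbols are notation introduced for this illustration.

```latex
\mathrm{KL}\big[\,\mathcal{N}(\mu, \operatorname{diag}(\sigma^2)) \,\|\, \mathcal{N}(0, I)\,\big]
  = \frac{1}{2} \sum_{j=1}^{d} \left( \mu_j^2 + \sigma_j^2 - \log \sigma_j^2 - 1 \right)
```

The expression is zero exactly when μ = 0 and σ² = 1 in every dimension, that is, when the posterior coincides with the prior.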

| Component | Standard Autoencoder | Variational Autoencoder |
|---|---|---|
| Encoder output | Single point z | Distribution parameters (μ, σ²) |
| Latent space structure | Unstructured, potentially discontinuous | Smooth, continuous, approximately Gaussian |
| Sampling | Deterministic | Stochastic (reparameterisation trick) |
| Training objective | Minimise reconstruction loss only | Maximise ELBO, i.e. minimise reconstruction loss + KL divergence |
| Generative capability | Poor (gaps in latent space) | Good (smooth interpolation and sampling) |
| Interpretability | Low | Higher (latent dimensions can encode semantic features) |

Why VAEs Are Well-Suited to Antimicrobial Peptide Design

The choice of VAE for AMP design is not arbitrary — it reflects specific properties of the AMP design problem that align well with the VAE's strengths.

The sequence-activity landscape is locally smooth. AMPs that differ by one or two amino acid substitutions often have similar activities, so the activity landscape in sequence space is not completely rugged. A smooth latent space that preserves this neighbourhood structure allows the VAE to propose sequences that are close in latent space to known active AMPs and therefore likely to retain activity.

Labelled data is scarce but unlabelled data is abundant. The APD3 (Antimicrobial Peptide Database) contains approximately 3,500 experimentally validated AMPs, a small dataset by deep learning standards. However, the UniProt database contains hundreds of millions of protein sequences, some of which share structural features with AMPs. A VAE can be pre-trained on this large unlabelled dataset and then fine-tuned on the smaller labelled dataset, a transfer-learning strategy that can substantially improve performance.

Interpretability matters for regulatory and biosafety purposes. The latent space of a well-trained VAE encodes biologically meaningful features — hydrophobicity gradients, charge distributions, helical propensity — that can be examined and understood. This interpretability is important not just for scientific understanding but for the regulatory and biosafety review that any novel antimicrobial agent must undergo before clinical use.

Multi-objective optimisation is tractable in latent space. An effective AMP must simultaneously satisfy multiple constraints: it must be active against the target pathogen, non-toxic to human cells, stable under physiological conditions, and manufacturable at reasonable cost. In the original sequence space, optimising for all of these objectives simultaneously is extremely difficult. In the continuous latent space of a VAE, gradient-based optimisation methods can be applied to navigate toward regions that satisfy all constraints simultaneously.

The Full VAE Pipeline for AMP Design: Step by Step

Step 1: Data Preparation and Sequence Representation

The first step is to assemble a training dataset and choose a representation for peptide sequences. For AMP design, the training data typically consists of three components: known active AMPs from databases such as APD3, DRAMP, and DBAASP; negative examples (peptides known to be inactive or non-antimicrobial) from databases such as UniProt; and, optionally, sequences with measured minimum inhibitory concentrations (MICs) against specific pathogens for supervised fine-tuning.

Peptide sequences are most commonly represented as one-hot encoded matrices — for a peptide of length L, the representation is an L × 20 binary matrix in which each row has a single 1 in the column corresponding to the amino acid at that position. Alternatively, amino acid property embeddings — vectors encoding physicochemical properties such as hydrophobicity, charge, size, and hydrogen bonding capacity — can be used as input features, providing a richer representation that incorporates domain knowledge. More recently, protein language model embeddings from models such as ESM-2 have been used as input representations, providing pre-trained features that encode evolutionary and structural information.
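The one-hot representation described above can be sketched in a few lines of plain Python. The column ordering (alphabetical by one-letter code) and the function name `one_hot_encode` are assumptions made for this illustration; any fixed ordering works as long as it is used consistently.

```python
# The 20 standard amino acids, ordered alphabetically by one-letter code.
# The ordering is an arbitrary choice made for this sketch.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def one_hot_encode(sequence):
    """Return an L x 20 list-of-lists with a single 1 per row."""
    matrix = []
    for position, residue in enumerate(sequence.upper()):
        if residue not in AA_INDEX:
            raise ValueError(
                f"non-standard residue {residue!r} at position {position}"
            )
        row = [0] * len(AMINO_ACIDS)
        row[AA_INDEX[residue]] = 1
        matrix.append(row)
    return matrix


encoded = one_hot_encode("GIGKFLHS")
print(len(encoded), "rows x", len(encoded[0]), "columns")
```

Rejecting non-standard residues at encoding time keeps malformed inputs out of the training set; a real pipeline might instead map them to an extra "unknown" column.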

Step 2: VAE Architecture Design

The architecture of the VAE must be adapted to the specific properties of peptide sequences. Several design choices are critical:

Variable-length sequences. AMPs vary in length from approximately 5 to 100 amino acids, with most falling in the 10–50 residue range. Handling variable-length sequences requires either padding all sequences to a fixed maximum length (with a masking mechanism to ignore padded positions) or using a recurrent or attention-based architecture that naturally handles variable-length inputs.
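A minimal sketch of the padding-plus-masking option follows. The pad symbol `-` and the helper names `pad_and_mask` and `masked_mean` are hypothetical choices for this example; the point is only that padded positions are excluded when a per-position loss is averaged.

```python
PAD = "-"  # hypothetical pad symbol; must not collide with a residue code


def pad_and_mask(sequences, max_len):
    """Right-pad each sequence to max_len and build a 1/0 mask per position."""
    padded, masks = [], []
    for seq in sequences:
        if not 0 < len(seq) <= max_len:
            raise ValueError(f"sequence length {len(seq)} outside 1..{max_len}")
        n_pad = max_len - len(seq)
        padded.append(seq + PAD * n_pad)
        masks.append([1] * len(seq) + [0] * n_pad)
    return padded, masks


def masked_mean(per_position_loss, mask):
    """Average a per-position loss over real (unmasked) positions only."""
    total = sum(loss * m for loss, m in zip(per_position_loss, mask))
    return total / sum(mask)


padded, masks = pad_and_mask(["KLAK", "GIGKFLHS"], max_len=8)
print(padded[0], masks[0])
```

Without the mask, short peptides would be dominated by trivially predictable pad positions, which can make the reconstruction loss look better than it is.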

Encoder architecture. For one-hot encoded sequences, a 1D convolutional encoder is a natural choice: it captures local sequence motifs and is computationally efficient. For protein language model embeddings, a transformer-based encoder can capture long-range dependencies between residues. The encoder outputs the mean vector μ and log-variance vector log σ² of the posterior distribution.

Reparameterisation trick. To enable backpropagation through the stochastic sampling step, the VAE uses the reparameterisation trick: instead of sampling z directly from N(μ, σ²), it samples ε from N(0, I) and computes z = μ + σ · ε. This makes the sampling operation differentiable with respect to μ and σ.
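The sampling step can be illustrated without a deep learning framework. The sketch below assumes the encoder outputs a log-variance vector, so that σ = exp(0.5 · log σ²); the function name `reparameterise` is a choice made for this example. In a real model the same arithmetic would be written with framework tensors so that gradients flow through μ and σ.

```python
import math
import random


def reparameterise(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, 1), independently per dimension."""
    z = []
    for m, lv in zip(mu, log_var):
        sigma = math.exp(0.5 * lv)   # log-variance -> standard deviation
        eps = rng.gauss(0.0, 1.0)    # the noise is the only random input
        z.append(m + sigma * eps)
    return z


rng = random.Random(0)  # seeded only to make the illustration repeatable
print(reparameterise([0.5, -1.0], [0.0, -2.0], rng))
```

Because the randomness enters only through ε, the output is a deterministic, differentiable function of μ and σ once ε is fixed.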

Decoder architecture. The decoder maps the latent vector z back to a sequence. For peptide generation, an autoregressive decoder — one that generates the sequence one amino acid at a time, conditioning each prediction on the latent vector and all previously generated amino acids — typically produces more coherent sequences than a non-autoregressive decoder.

KL annealing. A common training challenge for VAEs is posterior collapse: the approximate posterior collapses onto the prior and the decoder learns to ignore the latent vector, making the latent space uninformative. Powerful autoregressive decoders are especially prone to this. KL annealing addresses it by starting training with the KL term weighted at zero and gradually increasing its weight over the course of training.
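One simple way to implement such a schedule is a linear warm-up, sketched below. The linear shape, the function name `kl_weight`, and the parameter names are assumptions for this illustration; cyclical or sigmoid schedules are also used in practice.

```python
def kl_weight(step, warmup_steps, max_weight=1.0):
    """Linearly ramp the KL weight from 0 to max_weight over warmup_steps."""
    if warmup_steps <= 0:
        return max_weight
    return max_weight * min(1.0, step / warmup_steps)


for step in (0, 250, 500, 1000, 2000):
    print(step, kl_weight(step, warmup_steps=1000))
```

The returned weight multiplies the KL term in the loss at each training step, so early updates are driven almost entirely by reconstruction.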

Step 3: Training the VAE

Training proceeds by minimising the negative ELBO on the training dataset using stochastic gradient descent or a variant such as Adam. The β-VAE variant, which multiplies the KL divergence term by a coefficient β > 1, tends to produce a more disentangled latent space in which individual latent dimensions correspond more closely to interpretable biological features, usually at some cost in reconstruction accuracy. For AMP design, a β value between 2 and 10 can yield a latent space in which dimensions track features such as net charge, hydrophobic moment, and helix propensity, although the best value is dataset-dependent and should be tuned.
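As a rough sketch of the quantity being minimised, the function below computes a β-weighted negative ELBO for a single peptide. It assumes the decoder outputs a probability distribution over the 20 amino acids at each position and that the posterior is a diagonal Gaussian with a standard Gaussian prior; all function and parameter names are hypothetical.

```python
import math


def reconstruction_nll(true_indices, predicted_probs):
    """Negative log-likelihood of the true residues, summed over positions."""
    return -sum(
        math.log(probs[idx]) for idx, probs in zip(true_indices, predicted_probs)
    )


def kl_to_standard_normal(mu, log_var):
    """Closed-form KL[N(mu, diag(exp(log_var))) || N(0, I)], in nats."""
    return 0.5 * sum(
        m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, log_var)
    )


def beta_vae_loss(true_indices, predicted_probs, mu, log_var, beta=4.0):
    """Negative ELBO with the KL term scaled by beta (beta=1 is a plain VAE)."""
    recon = reconstruction_nll(true_indices, predicted_probs)
    return recon + beta * kl_to_standard_normal(mu, log_var)


# Toy inputs: two positions with uniform decoder predictions, 1-D latent.
uniform = [1.0 / 20] * 20
print(beta_vae_loss([3, 8], [uniform, uniform], mu=[1.0], log_var=[0.0]))
```

Combining β with an annealing schedule amounts to replacing the fixed `beta` with a value that grows during training.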

Conditional VAEs (CVAEs) extend the standard VAE by conditioning both the encoder and decoder on a label variable — in this case, the activity class (AMP vs. non-AMP) or a continuous activity measure (MIC). This allows the model to learn separate regions of the latent space for active and inactive sequences, making it possible to generate sequences by sampling from the active region of the latent space.

Step 4: Latent Space Exploration and Candidate Generation

Once the VAE is trained, candidate AMPs are generated by sampling from the latent space and decoding the samples. Several strategies can be used:

Random sampling from the prior. Sampling z from N(0, I) and decoding produces novel sequences that are statistically consistent with the training distribution. This is the simplest approach but does not target specific activity profiles.

Latent space interpolation. Given two known active AMPs A and B, their latent representations z_A and z_B can be interpolated: z_interp = (1 − t) · z_A + t · z_B for t ∈ [0, 1]. Decoding points along this interpolation path produces sequences that smoothly transition between the two parent sequences, potentially identifying novel sequences with intermediate or superior activity profiles.
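The interpolation formula translates directly into code. In the sketch below, latent vectors are plain Python lists and `interpolate_latents` is a hypothetical helper; each returned point would then be passed to the trained decoder, which is not shown.

```python
def interpolate_latents(z_a, z_b, num_points):
    """Return num_points latent vectors evenly spaced from z_a to z_b inclusive."""
    if len(z_a) != len(z_b):
        raise ValueError("latent vectors must have the same dimensionality")
    if num_points < 2:
        raise ValueError("need at least the two endpoints")
    path = []
    for k in range(num_points):
        t = k / (num_points - 1)  # t runs from 0 to 1
        path.append([(1 - t) * a + t * b for a, b in zip(z_a, z_b)])
    return path


for z in interpolate_latents([0.0, 0.0], [2.0, -4.0], num_points=5):
    print(z)
```

Linear interpolation is the simplest choice; because samples from a high-dimensional Gaussian concentrate near a shell, some practitioners prefer spherical interpolation, which is not shown here.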

Bayesian optimisation in latent space. A surrogate model (typically a Gaussian process) is trained to predict AMP activity from latent vectors, using the small set of experimentally validated sequences as training data. The surrogate model is then used to guide sampling toward regions of the latent space predicted to have high activity, using an acquisition function such as Expected Improvement (EI) or Upper Confidence Bound (UCB). This approach — known as Bayesian optimisation with a generative model — is one of the most sample-efficient methods for navigating biological sequence space.
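To make the acquisition step concrete, here is a sketch of Expected Improvement for a maximisation problem, given the surrogate's predictive mean and standard deviation at a candidate latent point. It assumes activity is expressed so that higher is better (for example, a negated log MIC); the function names and the exploration parameter `xi` are choices made for this example, and fitting the Gaussian process itself is not shown.

```python
import math


def normal_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def normal_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def expected_improvement(mean, std, best_so_far, xi=0.0):
    """EI of a candidate whose predicted score is N(mean, std^2), maximising."""
    improvement = mean - best_so_far - xi
    if std <= 0.0:
        # No predictive uncertainty: improvement is either certain or absent.
        return max(0.0, improvement)
    z = improvement / std
    return improvement * normal_cdf(z) + std * normal_pdf(z)


# Same predicted mean, different uncertainty: the less certain candidate scores higher.
print(expected_improvement(0.0, 1.0, best_so_far=0.0))
print(expected_improvement(0.0, 2.0, best_so_far=0.0))
```

The second term rewards uncertainty, which is what lets the search trade off exploiting known good regions against exploring poorly characterised ones.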

Gradient-based optimisation. If the activity predictor is differentiable, gradients can be computed with respect to the latent vector and used to perform gradient ascent toward higher-activity regions of the latent space. This approach is faster than Bayesian optimisation but is more susceptible to finding local optima.
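The update rule can be sketched with a toy predictor standing in for a trained activity model. The quadratic `toy_activity` function and its analytic gradient below are purely illustrative assumptions; in practice the gradient would come from automatic differentiation of a neural predictor.

```python
def toy_activity(z, optimum):
    """Hypothetical differentiable score that peaks at `optimum`."""
    return -sum((zi - oi) ** 2 for zi, oi in zip(z, optimum))


def toy_activity_grad(z, optimum):
    """Analytic gradient of toy_activity with respect to z."""
    return [-2.0 * (zi - oi) for zi, oi in zip(z, optimum)]


def gradient_ascent(z0, grad_fn, step_size=0.1, num_steps=100):
    """Repeatedly step the latent vector in the direction of the gradient."""
    z = list(z0)
    for _ in range(num_steps):
        grad = grad_fn(z)
        z = [zi + step_size * gi for zi, gi in zip(z, grad)]
    return z


optimum = [1.0, -1.0]
z_final = gradient_ascent([0.0, 0.0], lambda z: toy_activity_grad(z, optimum))
print(z_final, toy_activity(z_final, optimum))
```

This toy landscape has a single peak, so ascent always succeeds; on a real predictor, restarting from several initial points is a common way to mitigate local optima, and it is prudent to keep z within the region where the decoder and predictor were trained.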

Step 5: Filtering and Ranking Candidates

The raw output of the generative process must be filtered and ranked before experimental validation. A multi-stage filtering pipeline typically includes:

| Filter Stage | Method | Purpose |
|---|---|---|
| Validity check | Sequence length, standard amino acids only | Remove malformed sequences |
| Novelty filter | Sequence identity < 80% to training set | Ensure genuine novelty |
| Activity prediction | Classifier trained on APD3 / DRAMP | Retain predicted AMPs only |
| Toxicity prediction | ToxinPred, HemoPI | Remove predicted haemolytic or cytotoxic sequences |
| Stability prediction | Peptide half-life models, protease cleavage prediction | Retain stable sequences |
| Physicochemical filter | Net charge +2 to +9, hydrophobic moment > 0.2 | Enforce known AMP physicochemical rules |
| Diversity selection | Clustering by sequence identity | Ensure diverse candidate set |
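The cheaper stages of this pipeline are easy to prototype. The sketch below covers the validity check, a net-charge filter, and a novelty filter, under several stated simplifications: net charge is approximated as (K + R) − (D + E), ignoring histidine and the termini; similarity is approximated with the standard library's `difflib.SequenceMatcher` ratio rather than an alignment-based sequence identity; and the hydrophobic moment, learned predictors, and clustering stages are omitted. All function names and the example peptides are illustrative.

```python
from difflib import SequenceMatcher

STANDARD = set("ACDEFGHIKLMNPQRSTVWY")


def is_valid(seq, min_len=5, max_len=100):
    """Length within bounds and only the 20 standard residues."""
    return min_len <= len(seq) <= max_len and set(seq) <= STANDARD


def net_charge(seq):
    """Crude net charge at neutral pH: (K + R) - (D + E)."""
    return sum(seq.count(r) for r in "KR") - sum(seq.count(r) for r in "DE")


def max_similarity(seq, training_set):
    """Highest similarity ratio to any training sequence (proxy for identity)."""
    return max(
        (SequenceMatcher(None, seq, t, autojunk=False).ratio() for t in training_set),
        default=0.0,
    )


def passes_filters(seq, training_set, charge_range=(2, 9), max_sim=0.8):
    if not is_valid(seq):
        return False
    if not charge_range[0] <= net_charge(seq) <= charge_range[1]:
        return False
    return max_similarity(seq, training_set) < max_sim


training = ["GIGKFLHSAKKFGKAFVGEIMNS"]  # example training peptide
for candidate in ["KKLLKKLLKKLL", "GIGKFLHSAKKFGKAFVGEIMNS", "KKXLLKK"]:
    print(candidate, passes_filters(candidate, training))
```

Ordering the filters from cheapest to most expensive means that learned toxicity and stability predictors only run on sequences that have already passed the simple rules.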

Step 6: Wet-Lab Validation

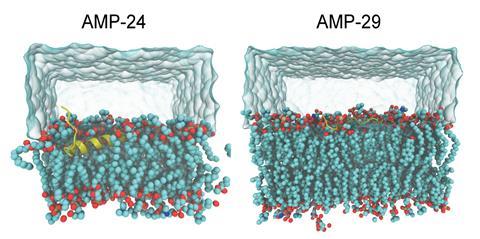

Computational design is only the beginning. Candidates that pass the filtering pipeline must be synthesised and tested experimentally. The standard validation workflow for AMPs involves solid-phase peptide synthesis (SPPS) to produce milligram quantities of each candidate peptide, followed by minimum inhibitory concentration (MIC) assays against a panel of target pathogens including both Gram-positive organisms (Staphylococcus aureus, Enterococcus faecalis) and Gram-negative organisms (Escherichia coli, Klebsiella pneumoniae, Pseudomonas aeruginosa), as well as drug-resistant strains such as MRSA and carbapenem-resistant Enterobacteriaceae. Haemolysis assays measure toxicity to human red blood cells, and membrane disruption assays confirm the mechanism of action.

A Worked Example: Designing AMPs Against MRSA

To make the pipeline concrete, consider the design of novel AMPs against methicillin-resistant Staphylococcus aureus (MRSA), a Gram-positive pathogen that the CDC estimates causes on the order of 10,000 deaths annually in the United States.

The training dataset is assembled from APD3 (3,500 AMPs), filtered to retain only peptides with reported activity against Gram-positive organisms (approximately 1,200 sequences), supplemented with 10,000 inactive peptides from UniProt as negative examples. A conditional VAE with a 1D convolutional encoder and an autoregressive GRU decoder is trained with β = 4 and KL annealing over 100 epochs. The latent space dimensionality is set to 64.

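After training, the latent space is visualised using UMAP, revealing a clear separation between the AMP and non-AMP regions, with sub-clusters corresponding to known AMP families (defensins, cathelicidins, magainins). A Gaussian process surrogate model is trained on the 50 AMPs with the lowest reported MIC values against S. aureus, and Bayesian optimisation is run for 20 iterations. Each iteration generates 10 candidates, scores them in silico with the surrogate model, and adds the predicted activity values to the surrogate as placeholder observations. This is a batch-selection heuristic (sometimes called the "kriging believer" strategy) that spreads proposals across the latent space; it adds no new experimental information, so the surrogate should be refitted on measured values once they become available.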

The top 96 candidates from the Bayesian optimisation campaign are synthesised and tested. Of these, 31 (32%) show MIC values ≤ 8 μg/mL against MRSA — a hit rate approximately 10-fold higher than random screening of peptide libraries, and comparable to the best results reported in the literature for VAE-based AMP design campaigns.

Limitations and Responsible Use

No generative model is a substitute for experimental validation, and several limitations of the VAE approach must be acknowledged. The latent space is only as good as the training data: if the training dataset is biased toward certain AMP families or certain target organisms, the generated sequences will reflect that bias. Activity prediction models are imperfect and may have poor calibration in regions of the latent space distant from the training data — uncertainty quantification using Gaussian processes or deep ensembles is essential for identifying when surrogate model predictions should not be trusted.

From a biosafety perspective, novel AMPs designed to disrupt bacterial membranes may also have activity against eukaryotic membranes, off-target effects on the human microbiome, or potential for misuse. Any AMP design campaign should include toxicity screening as a mandatory step, and the biosafety implications of the designed sequences should be assessed before publication or clinical development. The intellectual property and data governance implications of using publicly funded sequence databases for commercial drug design are also an evolving area of policy that requires careful attention.

Conclusion: Generative Models as a New Paradigm for Antibiotic Discovery

The application of VAEs to antimicrobial peptide design represents a genuine paradigm shift in antibiotic discovery — not because it replaces experimental biology, but because it fundamentally changes the relationship between computation and experiment. Instead of using computation to predict the properties of sequences that a human chemist has proposed, generative models use computation to propose sequences that no human would have thought to synthesise, guided by a learned model of the sequence-activity landscape.

The VAE is not the only generative architecture being applied to AMP design: diffusion models, language model-based approaches, and reinforcement learning methods are all active areas of research. It nonetheless remains one of the most interpretable and mathematically principled approaches, and its continuous latent space makes it well suited to the multi-objective optimisation problems that characterise real-world drug design. As the AMR crisis deepens and the need for novel antimicrobials becomes more urgent, generative models like the VAE are likely to become an important component of the antibiotic discovery toolkit.