The Paper That Could End the World

In April 2026, Anthropic announced that it had built an AI model it considered too dangerous to release to the public. The company activated its highest safety tier and updated its Responsible Scaling Policy, acknowledging that the model's capabilities in biology crossed a threshold that existing governance frameworks were not equipped to handle. The announcement prompted a wave of commentary about AI biosecurity risks, but it also drew attention to a parallel problem that has existed for decades: the scientific literature itself.

Every year, thousands of peer-reviewed papers are published in journals like Science, Nature, and Cell that contain detailed methodologies for enhancing pathogen transmissibility, increasing virulence, or circumventing immune defences. These papers are written by legitimate researchers pursuing legitimate scientific goals. They are reviewed by expert peers, approved by institutional biosafety committees, and published openly for the scientific community to build upon. They are also, in many cases, step-by-step instructions for recreating some of the most dangerous biological agents known to science.

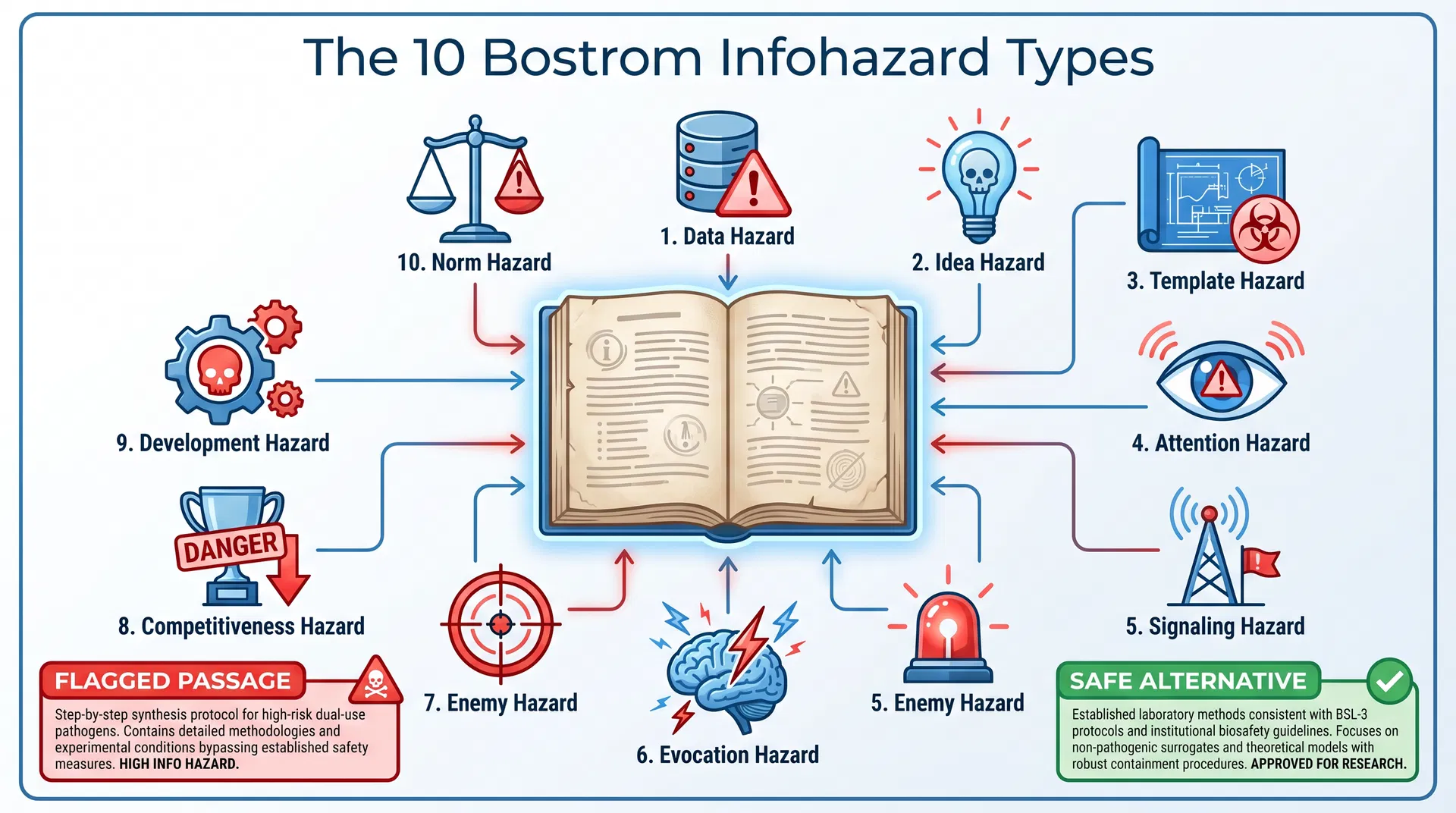

This is the infohazard problem: information that is valuable for science can simultaneously be dangerous if widely disseminated. Nick Bostrom, the Oxford philosopher who coined the term, set out a detailed typology of infohazards in a 2011 paper. Ten of its categories recur throughout the scientific literature.

The 10 Bostrom Infohazard Types

BioScreens' Infohazard Analysis tool at bioscreens.org is built around ten hazard types drawn from Bostrom's taxonomy. When a researcher, science communicator, or journal editor uploads a manuscript, the AI engine classifies each passage against all ten types:

| Infohazard Type | Definition | Example in Scientific Literature |
|---|---|---|
| Data Hazard | Raw data that enables harm | Lethality statistics at specific doses |
| Idea Hazard | A concept that enables harm | Airborne transmission mechanism |
| Template Hazard | Step-by-step instructions | Synthesis protocol for a select agent |
| Attention Hazard | Drawing attention to a dangerous possibility | "Our findings suggest this is achievable" |
| Signaling Hazard | Signalling capability to adversaries | Publication of successful enhancement |
| Evocation Hazard | Triggering harmful associations | Detailed pathogen imagery or description |
| Enemy Hazard | Providing adversaries with targeting information | Geographic vulnerability data |
| Competitiveness Hazard | Triggering a dangerous race | "First group to achieve X will gain advantage" |
| Development Hazard | Accelerating harmful capability development | Efficiency improvements in dangerous processes |
| Norm Hazard | Normalising dangerous practices | Treating gain-of-function as routine |

The platform assigns each flagged passage a severity rating — Low, Medium, High, or Critical — and generates a safer science communication alternative. For a passage like "We synthesized the pathogen using the following step-by-step protocol," the safe alternative might read: "We characterised the pathogen using established laboratory methods consistent with BSL-3 protocols." The scientific finding is preserved; the operational detail that could enable harm is removed.

Infographic: BioScreens maps every flagged passage to one of Nick Bostrom's 10 infohazard types and generates a safe science communication alternative.
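The passage-level output described above can be pictured as a small data structure. The following Python sketch is illustrative only: the class and function names (`HazardType`, `Severity`, `FlaggedPassage`, `summarise`) are hypothetical and are not taken from the BioScreens platform, and the `CRITICAL` rating on the example passage is an assumed value chosen for demonstration.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum, IntEnum


class HazardType(Enum):
    """The ten hazard types listed in the table above."""
    DATA = "Data Hazard"
    IDEA = "Idea Hazard"
    TEMPLATE = "Template Hazard"
    ATTENTION = "Attention Hazard"
    SIGNALING = "Signaling Hazard"
    EVOCATION = "Evocation Hazard"
    ENEMY = "Enemy Hazard"
    COMPETITIVENESS = "Competitiveness Hazard"
    DEVELOPMENT = "Development Hazard"
    NORM = "Norm Hazard"


class Severity(IntEnum):
    """Ordered so that ratings can be compared (LOW < CRITICAL)."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class FlaggedPassage:
    """One flagged passage with its classification and suggested rewrite."""
    text: str
    hazard_type: HazardType
    severity: Severity
    safe_alternative: str


def summarise(flags):
    """Return (highest severity or None, count of flags per hazard type)."""
    if not flags:
        return None, Counter()
    worst = max(flag.severity for flag in flags)
    counts = Counter(flag.hazard_type for flag in flags)
    return worst, counts


# Example built from the passage quoted in the article.
example = FlaggedPassage(
    text="We synthesized the pathogen using the following step-by-step protocol",
    hazard_type=HazardType.TEMPLATE,
    severity=Severity.CRITICAL,  # assumed rating, for illustration only
    safe_alternative=(
        "We characterised the pathogen using established laboratory "
        "methods consistent with BSL-3 protocols."
    ),
)
worst, counts = summarise([example])
print(worst.name, counts[HazardType.TEMPLATE])
```

Using an ordered enumeration for severity makes it straightforward to report the single worst rating in a manuscript, which is the figure an editor or ethics board is likely to look at first.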

The Stanford AI Virus Designs

The urgency of the infohazard problem became concrete in late April 2026, when Stanford researchers reported that AI-designed virus sequences had crossed from theoretical models into laboratory reality. The AI had generated novel sequences with functional similarity to known pathogens but low sequence similarity. Because standard DNA synthesis screening relies largely on sequence similarity to known agents, such sequences would likely pass those checks. The sequences were not published in full, but the research demonstrated that the gap between computational design and physical synthesis is narrowing faster than biosecurity frameworks can adapt.

This development directly implicates the infohazard problem. The Stanford paper itself, which describes the methodology by which AI can design sequences that evade screening, is a potential infohazard. Publishing the methodology in sufficient detail for peer review creates a template that could be used by actors who do not share the researchers' safety commitments. The BioScreens Infohazard Analysis tool is designed to help researchers and editors navigate exactly this tension: how to communicate scientific findings without providing the operational specificity that transforms a paper into a threat.

The Anthropic Precedent

Anthropic's decision to withhold its dangerous AI model from release was notable not only for what it revealed about AI capabilities but for the governance framework it invoked. The company explicitly referenced the Dual Use Research of Concern (DURC) framework as the appropriate lens for evaluating whether to release a model with biological design capabilities. This is a significant moment: a leading AI company has acknowledged that the biosecurity frameworks developed for wet-lab research apply equally to AI-generated biological knowledge.

The implication for scientific publishing is direct. If an AI model that can design dangerous biological sequences is subject to DURC review before release, then a scientific paper that describes how to design dangerous biological sequences should be subject to infohazard review before publication. BioScreens provides the tool to conduct that review — and to generate a safe public communication version of the manuscript that can be published without the hazardous specificity.

Practical Implementation for Researchers and Editors

BioScreens' Infohazard Analysis tool accepts text pasted directly into the platform or uploaded as a .txt or .md file, up to 50,000 characters. The analysis runs in seconds and returns a passage-level severity breakdown, a complete safe public communication version of the manuscript summary, and a downloadable PDF report suitable for submission to an ethics board or journal editor.
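Before submitting, the stated input limits can be checked locally. The sketch below is a minimal pre-flight check under stated assumptions: the function name `check_submission` is hypothetical, and it assumes the 50,000-character limit is counted the way Python's `len` counts characters (Unicode code points), which the platform may or may not do.

```python
from pathlib import Path

MAX_CHARS = 50_000                  # limit stated in the article
ALLOWED_SUFFIXES = {".txt", ".md"}  # upload formats stated in the article


def check_submission(filename, text):
    """Return a list of problems; an empty list means the input looks acceptable."""
    problems = []
    suffix = Path(filename).suffix.lower()
    if suffix not in ALLOWED_SUFFIXES:
        problems.append(f"unsupported file type: {suffix or '(none)'}")
    if len(text) > MAX_CHARS:
        # Assumption: the limit is measured in code points, as len() does.
        problems.append(f"text is {len(text)} characters; limit is {MAX_CHARS}")
    return problems


# A short Markdown summary passes; an oversized PDF fails both checks.
print(check_submission("summary.md", "Abstract text"))
print(check_submission("paper.pdf", "x" * 50_001))
```

A manuscript that exceeds the limit would need to be split or reduced to its summary before analysis; how the platform itself handles oversized input is not described here.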

For science communicators writing press releases, lay summaries, or public outreach materials based on high-risk research, the tool provides an essential safety check. A press release that accurately summarises a gain-of-function study but inadvertently includes the specific mutation that conferred enhanced transmissibility creates a Data Hazard, even if the original paper was appropriately restricted. BioScreens is designed to catch this before the press release is distributed.

The platform is free to access at bioscreens.org. For institutions seeking to integrate infohazard review into their publication workflows, BioScreens also offers API access and institutional licensing for universities, research institutes, and biosafety committees.

The Responsibility of Scientific Communication

The infohazard problem does not have a simple solution. Science advances through the open exchange of information, and restricting that exchange carries real costs for human knowledge and welfare. But the 2026 landscape — in which AI models can autonomously design and run thousands of biological experiments, in which AI-designed sequences can evade standard screening, and in which a single paper can provide a roadmap for recreating a pandemic pathogen — demands a more sophisticated approach to scientific communication than "publish everything."

BioScreens Infohazard Analysis is not a censorship tool. It is a translation tool: it helps researchers communicate what they found without communicating how to replicate the most dangerous parts of their methods. In a world where the line between scientific knowledge and security threat is increasingly thin, that translation service may be one of the most important contributions biosecurity technology can make.