Designing Interventions to Reduce Catastrophic Risks from AI-Enabled Biotechnology


The convergence of artificial intelligence and biotechnology is one of the most consequential technological developments of the early twenty-first century. It promises to accelerate drug discovery, enable personalised medicine, and transform our understanding of living systems. It also introduces a category of risk that demands serious, sustained attention: the possibility that AI tools could dramatically lower the barriers to engineering dangerous biological agents, enabling catastrophic harm at a scale previously achievable only by state-level actors with sophisticated laboratory infrastructure.

This is not a hypothetical concern. In 2022, researchers at Collaborations Pharmaceuticals demonstrated that a generative AI model originally designed to discover therapeutic compounds could, when its objective function was inverted to reward rather than penalise toxicity, generate tens of thousands of candidate toxic molecules in under six hours. The output included known chemical warfare agents and new molecules predicted to be more toxic than the VX nerve agent. The experiment was conducted responsibly: none of the molecules were synthesised, and the results were published as a warning. But it illustrated, with uncomfortable clarity, that the same AI capabilities being developed for beneficial purposes carry inherent dual-use potential.

The Risk Landscape

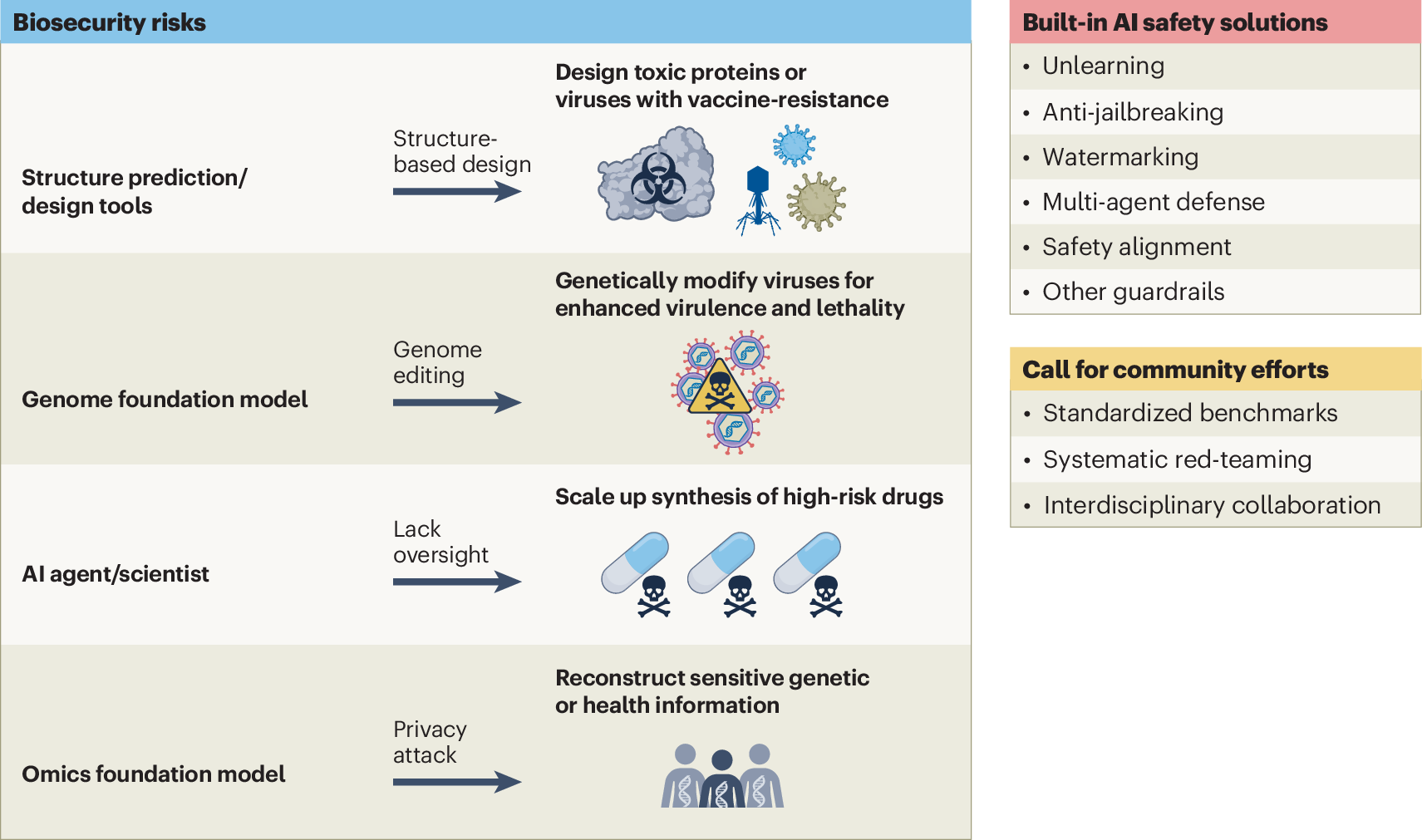

Understanding the catastrophic risk landscape from AI-enabled biotechnology requires distinguishing between different threat vectors, actors, and capability thresholds.

| Threat Vector | Current Barrier | AI Impact | Risk Horizon (estimated) |
|---|---|---|---|
| Pathogen enhancement (gain-of-function) | Requires wet-lab expertise and BSL-3/4 access | Lowers design barrier; predicts enhancement strategies | Near-term (2–5 years) |
| De novo pathogen synthesis | Requires advanced synthetic biology expertise | Dramatically lowers design barrier with generative models | Medium-term (5–10 years) |
| Targeted bioweapons (genetic) | Requires population genomics expertise | AI could enable targeting based on genetic markers | Medium-term (5–10 years) |
| Ecosystem disruption (gene drives) | Requires ecological modelling expertise | AI accelerates drive design and spread prediction | Near-term (2–5 years) |
| Aerosolisation and delivery optimisation | Requires engineering expertise | AI could assist with delivery system design | Near-term (2–5 years) |

The most immediate concern is not the creation of entirely novel pathogens, which remains technically demanding even with AI assistance, but the enhancement of existing pathogens and the lowering of barriers to accessing dangerous biological knowledge. Large language models trained on scientific literature can already compile detailed protocols for dangerous experiments from publicly available sources, and this capability is likely to improve as models advance.

A Framework for Intervention Design

Effective interventions must operate across multiple layers simultaneously. No single measure is sufficient; the goal is to create a layered defence system in which the failure of any one layer does not result in catastrophic outcomes.
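The logic of layering can be made concrete with a simple probabilistic model. As a deliberate simplification, assume catastrophe occurs only if every layer fails, and write $F_i$ for the event that layer $i$ fails, with probability $p_i$. The independence step below is an assumption rather than a claim about real-world layers, and the numbers are purely illustrative.

```latex
P(\text{all layers fail}) = P\left(\bigcap_{i=1}^{n} F_i\right) \le \min_{i} p_i ,
\qquad
P\left(\bigcap_{i=1}^{n} F_i\right) = \prod_{i=1}^{n} p_i \quad \text{if failures are independent.}

\text{Illustration (hypothetical values): } n = 4,\; p_i = 0.1 \;\Rightarrow\; \prod_{i=1}^{4} p_i = 0.1^{4} = 10^{-4}.
```

The upper bound holds however the failures are correlated, so under this model adding layers never leaves the system worse off than its strongest single layer. The product applies only when layers fail for unrelated reasons. An actor who can defeat several layers with the same capability pushes the true figure back toward the bound, which is why no single measure, and no set of measures that share a weakness, is sufficient.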

Layer 1: Capability Restriction at the AI Model Level. The most upstream intervention is restricting the development and deployment of AI models with the highest dual-use potential. This includes biosecurity fine-tuning and refusal training, in which AI models are trained to refuse requests for information that could facilitate the creation of dangerous biological agents. Tiered access to frontier biological AI models would restrict the most capable models to vetted researchers operating under institutional biosafety oversight, mirroring the existing framework for select agent research. Red-teaming and adversarial evaluation must be mandated for models with significant biological capabilities.
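A tiered access scheme of this kind can be expressed as a deny-by-default policy check. The sketch below is hypothetical: the tier names, the `Requester` fields, and the requirements attached to each tier are illustrative assumptions, not an existing standard or any provider's actual policy.

```python
from dataclasses import dataclass
from enum import Enum

# Hypothetical sketch of a tiered-access policy; tiers and requirements are illustrative only.


class ModelTier(Enum):
    GENERAL = "general"        # widely available, refusal-trained models
    RESTRICTED = "restricted"  # elevated biological capability
    FRONTIER = "frontier"      # highest dual-use potential


@dataclass(frozen=True)
class Requester:
    identity_verified: bool
    institutional_affiliation: bool
    under_biosafety_oversight: bool  # e.g. project reviewed by an institutional biosafety committee


def may_access(tier: ModelTier, req: Requester) -> bool:
    """Return True only if the requester meets every requirement for the tier."""
    if tier is ModelTier.GENERAL:
        return True
    if tier is ModelTier.RESTRICTED:
        return req.identity_verified and req.institutional_affiliation
    if tier is ModelTier.FRONTIER:
        # Most capable models: vetted researchers under institutional biosafety oversight only.
        return (req.identity_verified
                and req.institutional_affiliation
                and req.under_biosafety_oversight)
    return False  # deny by default for any tier not handled above


if __name__ == "__main__":
    vetted = Requester(True, True, True)
    unaffiliated = Requester(True, False, False)
    print(may_access(ModelTier.FRONTIER, vetted))        # True
    print(may_access(ModelTier.FRONTIER, unaffiliated))  # False
```

The deny-by-default final branch means that a tier added later without an explicit rule is refused rather than silently opened.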

Layer 2: Monitoring and Detection at the Infrastructure Level. Even if upstream capability restrictions are imperfect, downstream monitoring can detect and disrupt malicious actors before they cause harm. DNA synthesis screening must be made mandatory and comprehensive, checking every commercial DNA synthesis order against an authoritative database of sequences of concern. Expanding biosurveillance requires a global network of genomic surveillance systems capable of identifying unusual pathogen sequences in clinical, environmental, and agricultural samples.
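At its core, synthesis screening compares each order with a database of sequences of concern. The sketch below is a deliberately simplified illustration: it flags any order that shares an exact k-mer (a length-k substring) with a database entry on either DNA strand. The function names, the choice of k, and the database entry are hypothetical placeholders, and the sequence is an arbitrary string, not a real sequence of concern. Production screening relies on curated databases and sequence-similarity search rather than exact matching.

```python
from collections import defaultdict

# Hypothetical sketch: exact k-mer screening of a DNA synthesis order.
# Real screening uses curated databases and similarity search, not exact matching.

_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    """Reverse complement of an A/C/G/T string (other characters pass through)."""
    return seq.upper().translate(_COMPLEMENT)[::-1]


def kmers(seq: str, k: int) -> set[str]:
    """All length-k substrings of seq; empty if seq is shorter than k."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def build_index(database: dict[str, str], k: int) -> dict[str, set[str]]:
    """Map every k-mer of every database entry to the identifiers that contain it."""
    index = defaultdict(set)
    for name, seq in database.items():
        for kmer in kmers(seq.upper(), k):
            index[kmer].add(name)
    return dict(index)


def screen_order(order: str, index: dict[str, set[str]], k: int) -> list[str]:
    """Sorted identifiers of entries sharing a k-mer with either strand of the order."""
    order = order.upper()
    hits: set[str] = set()
    for strand in (order, reverse_complement(order)):
        for kmer in kmers(strand, k):
            hits |= index.get(kmer, set())
    return sorted(hits)


if __name__ == "__main__":
    # Hypothetical placeholder entry: an arbitrary string, not a real sequence of concern.
    database = {"entry_A": "ATGCCGTTAGCATCGGATCCTAGGCTAACG"}
    index = build_index(database, k=12)
    print(screen_order("ttttGCATCGGATCCTAGaaaa", index, k=12))  # ['entry_A']
    print(screen_order("A" * 50, index, k=12))                  # []
```

Both strands are checked because synthesised DNA is double-stranded, so a sequence ordered in either orientation carries the same content.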

Layer 3: Governance and Norms at the Institutional and International Level. Mandatory biosecurity review for dual-use AI research — analogous to the existing institutional biosafety committee (IBC) framework — would create accountability at the institutional level. The Biological Weapons Convention (BWC), negotiated in 1972 and lacking a verification mechanism, must be updated to address AI-enabled biotechnology. Norms development within the AI research community, extending existing responsible disclosure commitments to specifically address biosecurity, would complement regulatory frameworks.

Layer 4: Resilience and Response Capacity. Building resilience requires investment in rapid vaccine and therapeutic development platforms, medical countermeasure stockpiling, and global health system strengthening. The most important resilience measure is a well-funded, well-staffed global health system capable of detecting, containing, and responding to biological threats — particularly in low- and middle-income countries where surveillance and response capacity is most limited.

The Role of the Biosecurity Community

Designing and implementing these interventions requires genuine expertise in both AI and biosecurity, a combination that is rare and must be actively cultivated. Biosecurity experts must engage directly with AI developers to ensure that biosecurity considerations are incorporated into model design, training data curation, and deployment decisions from the outset. The current practice of consulting biosecurity experts only after a model has been built is insufficient.

Biosecurity researchers must develop quantitative frameworks for assessing the dual-use risk of specific AI capabilities — analogous to the risk assessment frameworks used in biosafety level classification. This requires empirical research into what AI tools can actually do, not just what they theoretically could do. The biosecurity community must also engage with policymakers to translate technical risk assessments into actionable policy recommendations.

Conclusion

The catastrophic risks from AI-enabled biotechnology are real, near-term, and addressable — but only if the response is commensurate with the threat. Piecemeal, reactive regulation will not be sufficient. What is needed is a comprehensive, layered intervention framework that operates simultaneously at the level of AI model development, laboratory infrastructure, institutional governance, and international coordination. The window for proactive action is open, but it will not remain open indefinitely. The time to build these defences is now, before the capability thresholds that make catastrophic misuse straightforward have been crossed.