Introduction: The Communication Revolution Scientists Did Not See Coming

Science has always had a communication problem. The gap between what researchers know and what the public understands has persisted for decades — not for lack of effort, but because the channels, formats, and incentives of traditional science communication were never designed for speed, scale, or genuine accessibility. Peer-reviewed journals, press releases, and conference proceedings served the scientific community well, but they were never built for the age of the scroll, the voice query, or the AI-generated summary.

That age has now arrived, faster than most scientific institutions were prepared for.

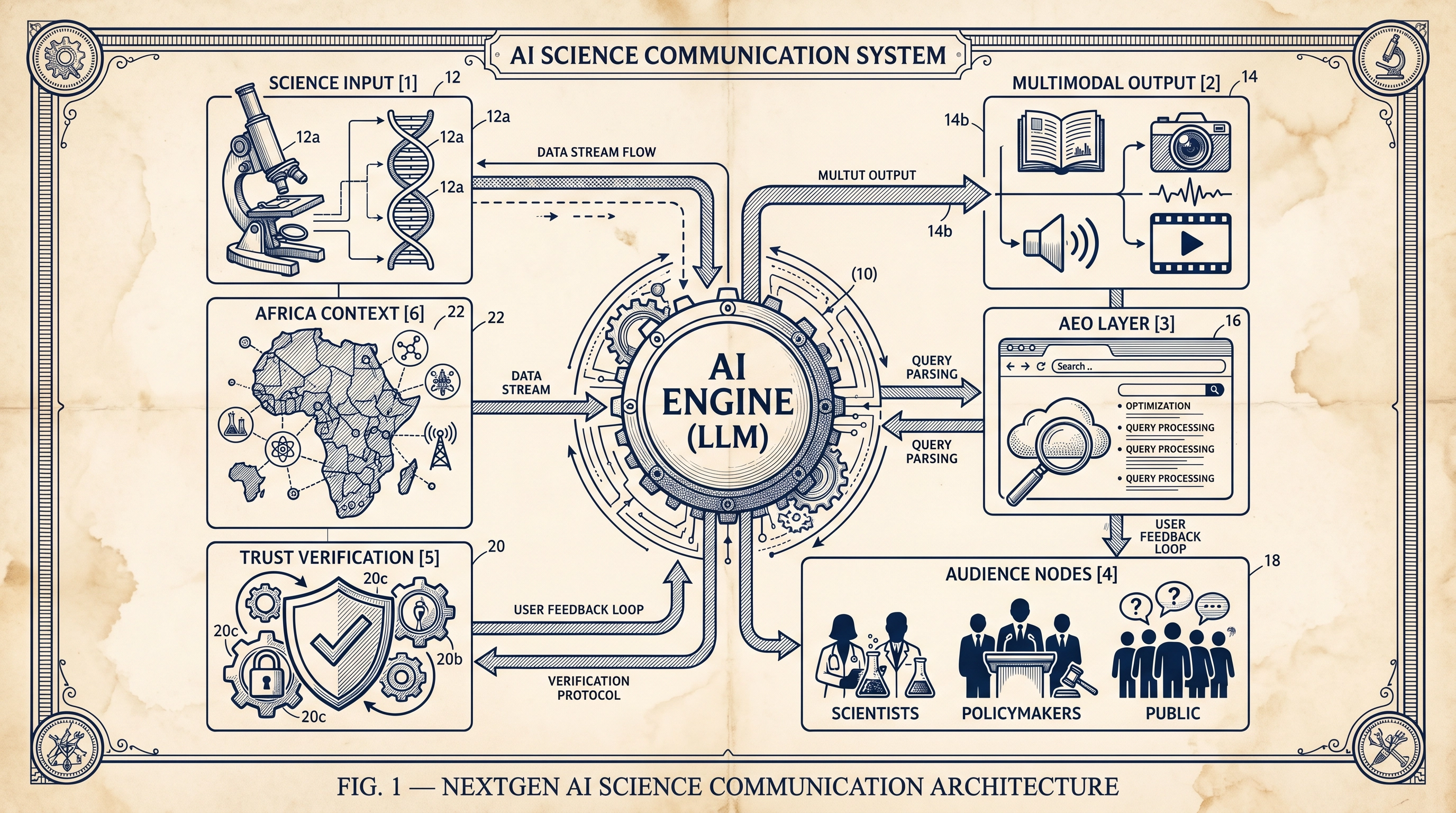

The emergence of large language models (LLMs) such as ChatGPT, Claude, and Gemini, alongside multimodal AI systems capable of generating text, images, audio, and video, has created what scholars are beginning to call the NextGen AI Science Communication paradigm. This is not simply a matter of scientists using AI to write better abstracts or create infographics more efficiently. It represents a structural transformation in how scientific knowledge is produced, packaged, distributed, discovered, and trusted. A 2025 roadmap published in the Journal of Science Communication identified four critical research frontiers: communication about AI, communication with AI, the impact of AI on science communication ecosystems, and AI's influence on the theoretical and methodological foundations of the field itself [1].

For scientists working at the intersection of biosafety, biosecurity, biotechnology, and public health — particularly those operating in the African context — this transformation is not a distant horizon. It is the present operational environment.

What "NextGen" Actually Means: Beyond Content Generation

The term "NextGen AI Science Communication" is sometimes used loosely to mean little more than using ChatGPT to draft a press release. That misses the point entirely. The NextGen paradigm encompasses at least five distinct and interrelated shifts.

First, AI as a communicative agent. AI systems are no longer passive tools that scientists use to produce content. They are increasingly active agents in the communication chain — answering questions, synthesising research, generating explanations, and shaping public understanding of science without any human scientist being directly involved. When a member of the public asks Perplexity AI or Google's AI Overviews about the safety of a genetically modified crop, the response they receive is generated by a model trained on a corpus of scientific and non-scientific text, curated by algorithmic processes that no individual scientist controls. The scientist's published research may — or may not — be represented accurately in that response.

Second, the collapse of the intermediary layer. Traditional science communication relied on a chain of intermediaries: journal editors, science journalists, press officers, and broadcasters who translated research for public audiences. AI is compressing or bypassing this chain. A 2024 study published in PNAS Nexus found that AI-generated summaries of scientific research were perceived as more trustworthy by lay readers and led to better information recall than summaries written by scientists themselves [2]. This finding is simultaneously encouraging and alarming: encouraging because it suggests AI can improve accessibility; alarming because it raises deep questions about accuracy, bias, and the displacement of trained science communicators.

Third, the multimodal turn. NextGen science communication is not text-only. AI systems can now generate scientifically themed infographics, explainer videos, podcast scripts, interactive data visualisations, and even synthetic voices reading research summaries in multiple languages. For scientists in Africa communicating to multilingual, multi-literacy audiences, this multimodal capability is transformative. Platforms like Gubbi Labs have demonstrated how generative AI and LLMs can simplify complex research and produce science stories in regional languages, making science accessible to audiences that were previously entirely excluded from the conversation [3].

Fourth, the rise of Answer Engine Optimisation (AEO). The way people discover scientific information has shifted from keyword-based search to query-based AI responses. When a policymaker in Nairobi asks an AI assistant about biosafety regulations for gene-edited crops, they are not reading a list of ten blue links; they are receiving a synthesised answer generated by a model. Scientists and science communicators who do not structure their content to be retrievable and accurately represented by these AI answer engines risk being invisible in the most consequential information environments of the next decade. AEO, the practice of structuring content so that AI systems can accurately extract, cite, and represent it, is becoming as important as traditional SEO for scientific visibility [4].

Fifth, AI-generated misinformation at scale. The same capabilities that make AI a powerful tool for science communication also make it a vector for what researchers have called "wrongness at scale." Generative AI systems can produce plausible-sounding but factually incorrect scientific content with remarkable fluency. In biosecurity contexts — where misinformation about pathogen risks, vaccine safety, or dual-use research can have life-or-death consequences — this is not an abstract concern. It is an active threat that requires scientists to be not only producers of accurate content but active monitors of the AI-mediated information environment.
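The monitoring role described above can start as a simple manual routine: paste an AI-generated answer into a script and compare it against a checklist of points you know to be correct in your domain. The sketch below is one hypothetical way to do that, not an established tool. The `ClaimCheck` structure, the `audit_answer` function, the checklist, and the sample answer are all invented for illustration, and the matching is plain substring search, which will miss paraphrases and negations.

```python
from dataclasses import dataclass, field


@dataclass
class ClaimCheck:
    """One point an accurate answer should cover (hypothetical structure)."""
    label: str
    expected_phrases: list[str]  # at least one should appear in the answer
    red_flag_phrases: list[str] = field(default_factory=list)  # known errors


def audit_answer(answer: str, checks: list[ClaimCheck]) -> dict[str, str]:
    """Label each check 'contradicted', 'ok', or 'missing' for one answer."""
    text = answer.lower()
    report: dict[str, str] = {}
    for check in checks:
        # A known-wrong phrasing takes priority over any expected phrase.
        if any(p.lower() in text for p in check.red_flag_phrases):
            report[check.label] = "contradicted"
        elif any(p.lower() in text for p in check.expected_phrases):
            report[check.label] = "ok"
        else:
            report[check.label] = "missing"
    return report


# Illustrative data only: the checklist and the answer are invented.
checks = [
    ClaimCheck("names the regulator", ["biosafety authority"]),
    ClaimCheck("mentions risk assessment", ["risk assessment"],
               red_flag_phrases=["no assessment is required"]),
]
answer = ("Gene-edited crops are reviewed by the national biosafety authority. "
          "No assessment is required before release.")
for label, status in audit_answer(answer, checks).items():
    print(f"{label}: {status}")
```

Here the first check passes and the second is flagged as contradicted. Anything flagged still needs a human read before a correction is issued; the script only narrows down which answers deserve attention.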

The African Dimension: Opportunity, Exclusion, and Responsibility

Any serious discussion of NextGen AI Science Communication must grapple with its uneven geography. The research literature on AI and science communication remains heavily concentrated in a small set of mostly Western contexts: the United States, Germany, Australia, and a handful of East Asian nations [1]. Africa, which is home to some of the world's most pressing biosafety, biosecurity, and public health challenges, is largely absent from this literature as both a subject and a contributor.

This absence is not merely an academic gap. It has practical consequences. AI language models are trained predominantly on English-language, Western-produced text. When these models generate scientific explanations for African audiences — about malaria vaccine trials, mycotoxin contamination in food systems, or biosafety regulations for genetically modified organisms — they draw on a corpus that may not reflect African scientific knowledge, regulatory frameworks, or cultural contexts. The result can be explanations that are technically accurate in a Western frame but contextually misleading or irrelevant in an African one.

At the same time, the opportunity is real. Africa's scientific community is growing rapidly, and AI tools offer a genuine pathway to amplifying African scientific voices on the global stage. Scientists who master NextGen AI SciComm — who understand how to structure their research for AI retrieval, how to use multimodal AI to reach multilingual audiences, and how to monitor and correct AI-generated misinformation in their domains — will have a disproportionate impact on how African science is understood and valued globally.

For biosecurity professionals in particular, the stakes are especially high. The intersection of AI and biosecurity in Africa is a domain where communication failures can translate directly into policy failures, funding gaps, and preparedness deficits. Communicating biosecurity science effectively — to policymakers, to the public, and to international partners — is not a soft skill. It is a core professional competency.

The NextGen AI SciComm Toolkit: A Practical Framework

For scientists and science communicators navigating this new landscape, the following framework organises the key tools and strategies of NextGen AI Science Communication.

| Layer | What It Means | Key Tools & Approaches |
|---|---|---|
| Content Production | Using AI to draft, edit, and translate scientific content | ChatGPT, Claude, Gemini, Grammarly, DeepL |
| Multimodal Communication | Generating infographics, videos, podcasts, and visual explainers | DALL·E, Midjourney, Runway, ElevenLabs, Canva AI |
| AEO / LLMO | Structuring content for retrieval by AI answer engines and LLMs | Schema markup, FAQ sections, structured data, citation-dense writing |
| Audience Targeting | Reaching specific communities through AI-powered distribution | LinkedIn AI, Substack, Beehiiv, targeted social amplification |
| Misinformation Monitoring | Tracking and correcting AI-generated errors about your research domain | Google Alerts, Perplexity monitoring, manual AI query testing |
| Accessibility & Localisation | Translating and adapting content for multilingual, multi-literacy audiences | AI translation tools, regional language models, audio summaries |

The most important insight from this framework is that NextGen AI SciComm is not a single activity — it is a layered practice. Scientists who engage only at the content production layer (using AI to write better) while neglecting AEO, misinformation monitoring, and accessibility will find that their well-crafted content remains invisible or misrepresented in the AI-mediated information environments where their audiences increasingly live.
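To make the AEO layer concrete, here is a minimal sketch of the "schema markup" and "FAQ sections" approach: turning question-and-answer pairs into schema.org `FAQPage` structured data (JSON-LD) that can be embedded in a web page. The `faq_jsonld` helper is a hypothetical name introduced for this example. Whether any particular answer engine reads or rewards this markup is an assumption, not something the markup guarantees.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialise (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # ensure_ascii=False keeps non-English text readable in the output.
    return json.dumps(data, indent=2, ensure_ascii=False)


pairs = [
    (
        "What is Answer Engine Optimisation (AEO)?",
        "AEO is the practice of structuring scientific content so that AI "
        "answer engines can accurately retrieve, cite, and represent it.",
    ),
]

# JSON-LD is conventionally embedded in a script tag of this type.
print('<script type="application/ld+json">')
print(faq_jsonld(pairs))
print("</script>")
```

The same pattern extends to translated question sets: one list of pairs per language, each serialised separately, which connects the AEO layer to the accessibility and localisation layer.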

Trust, Accuracy, and the Ethics of AI-Mediated Science

The question of trust sits at the heart of the NextGen AI SciComm challenge. Science communication has always been, at its core, a trust-building enterprise. Scientists communicate not just to inform but to establish credibility, demonstrate transparency, and invite scrutiny. AI-mediated communication introduces new complications into this trust architecture.

When an AI system generates a summary of a scientific paper, it does not carry the reputational accountability of the scientist who wrote it. It cannot be questioned at a press conference, corrected in a follow-up study, or held responsible for a misleading framing. The scientist's name may appear in a citation, but the communicative act — the explanation that shapes public understanding — belongs to the model. This is a profound shift in the accountability structure of science communication, and it is one that the scientific community has not yet fully reckoned with.

There are also legitimate concerns about bias. AI systems trained on predominantly Western, English-language corpora will systematically underrepresent African scientific knowledge, indigenous ecological knowledge, and non-Western regulatory frameworks. For scientists working on biosafety governance in Africa — where the regulatory landscape, the ecological context, and the political economy of biotechnology are fundamentally different from those in Europe or North America — this bias is not a minor inconvenience. It is a structural distortion that shapes how African biosafety science is understood and acted upon by global audiences.

The ethical imperative for scientists in this environment is clear: engage actively with AI-mediated communication, not as passive subjects but as active architects. This means publishing in formats that AI systems can accurately retrieve and represent. It means monitoring AI-generated content in your domain and correcting errors through authoritative channels. It means contributing to the development of AI training corpora that include African scientific knowledge. And it means advocating for AI governance frameworks that require transparency about the sources and limitations of AI-generated scientific content.

Biosecurity Science Communication in the AI Era: A Special Case

Biosecurity science occupies a uniquely sensitive position in the NextGen AI SciComm landscape. Unlike most scientific domains, biosecurity involves information that can be genuinely dangerous if communicated without appropriate care — details about pathogen characteristics, vulnerability assessments, or dual-use research findings that could inform malicious actors as readily as they inform policymakers and public health professionals.

The challenge is not new. Scientists and biosecurity professionals have long navigated the tension between transparency and security in their communication practices. What AI introduces is a new dimension to this tension: the possibility that AI systems will extract, synthesise, and redistribute sensitive biosecurity information in ways that circumvent the careful judgements that human communicators make about what to share, with whom, and in what form.

At the same time, AI offers powerful tools for responsible biosecurity communication. Automated monitoring systems can detect the spread of biosecurity misinformation across social media platforms. AI-powered translation tools can make biosecurity guidance accessible to communities in multiple languages. Multimodal AI can generate clear, accurate visual explanations of biosafety protocols for audiences with limited scientific literacy. The question is not whether to use AI in biosecurity communication — it is how to use it responsibly, with appropriate safeguards and governance frameworks.

Key Takeaways

The NextGen AI Science Communication paradigm represents one of the most significant shifts in the history of science communication. For scientists, biosecurity professionals, and science communicators — particularly those working in Africa and other under-represented regions — the implications are both challenging and full of opportunity.

The scientists who will have the greatest impact in this new environment are not necessarily those who produce the most research. They are those who understand how AI systems discover, represent, and distribute scientific knowledge — and who actively shape that process rather than leaving it to algorithmic chance. This requires a new set of competencies: AEO literacy, multimodal content production, AI misinformation monitoring, and a deep understanding of the trust architectures that govern how AI-mediated scientific information is received by diverse audiences.

The transition to NextGen AI Science Communication is not optional. It is already underway. The question is whether scientists will engage with it proactively — shaping the AI-mediated information environment in ways that advance accurate, accessible, and equitable science communication — or whether they will remain passive observers as AI systems increasingly define how their work is understood by the world.

Frequently Asked Questions

What is NextGen AI Science Communication?

NextGen AI Science Communication refers to the emerging paradigm in which artificial intelligence systems, including large language models, multimodal AI, and AI answer engines, are active agents in the production, distribution, and reception of scientific information, rather than merely passive tools used by human communicators.

How does AI change the way scientists communicate their research?

AI changes science communication in multiple ways: it enables faster, more accessible content production; it creates new channels (AI answer engines, voice assistants) through which audiences discover scientific information; it introduces new risks of misinformation at scale; and it shifts the accountability structure of science communication by inserting AI systems as intermediaries between scientists and their audiences.

What is Answer Engine Optimisation (AEO) and why does it matter for scientists?

AEO is the practice of structuring scientific content so that AI answer engines, such as ChatGPT, Perplexity, and Google's AI Overviews, can accurately retrieve, cite, and represent it in their responses. As more people use AI assistants to answer scientific questions, scientists who do not optimise their content for AI retrieval risk being invisible or misrepresented in the most important information environments of the coming decade.

What are the specific challenges for African scientists in the AI science communication landscape?

African scientists face specific challenges including: AI models trained predominantly on Western, English-language text that may misrepresent African scientific contexts; limited representation of African scientific knowledge in AI training corpora; and the risk that AI-generated content about African biosafety, public health, and biotechnology issues will reflect Western frames rather than African realities. At the same time, AI offers significant opportunities for multilingual communication and global amplification of African scientific voices.

How should biosecurity scientists approach AI-mediated communication?

Biosecurity scientists should engage actively with AI-mediated communication while maintaining appropriate safeguards around sensitive information. This includes monitoring AI-generated content in their domain for accuracy, contributing to responsible AI governance frameworks, using AI tools to improve the accessibility and reach of biosecurity guidance, and advocating for transparency requirements in AI systems that handle biosecurity-relevant information.

References

1. Kessler, S. H., Mahl, D., Schäfer, M. S., & Volk, S. C. (2025). All Eyez on AI: a roadmap for science communication research in the age of Artificial Intelligence. Journal of Science Communication, 24(02), Y01. https://jcom.sissa.it/article/pubid/JCOM_2402_2025_Y01/

2. Spitale, G., Biller-Andorno, N., & Germani, F. (2024). AI model GPT-4 outperforms human experts in science communication. PNAS Nexus. Referenced in: May, I. C. (2025). Science Communication in the Age of GenAI: Between Trust, Truth, and Transformation. The Elm, University of Maryland, Baltimore. https://elm.umaryland.edu/elm-stories/2025/Science-Communication-in-the-Age-of-GenAI-Between-Trust-Truth-and-Transformation.php

3. Gubbi Labs. (2025). How AI is helping to amplify science communication. Journalism AI. https://www.journalismai.info/blog/t29vospm0b7qeotwpc7okd5m0bzbti

4. LaVoie Health Science. (2025). GEO Is the New SEO: How Generative Artificial Intelligence Is Transforming Health and Science Visibility. https://lavoiehealthscience.com/geo-is-the-new-seo-how-generative-artificial-intelligence-ai-is-transforming-health-and-science-visibility/