For nearly a century, the drug development pipeline has depended on live animal testing as the primary gateway to human clinical trials. The Federal Food, Drug, and Cosmetic Act of 1938 codified this requirement, mandating that every new drug candidate be validated in animal models before it could be administered to a single human subject. That paradigm is now being dismantled, not by regulation alone but also by the emergence of AI-powered, genetically-engineered in silico animal models that are demonstrably more predictive, faster, and more ethically defensible than their biological counterparts.

The Legislative Turning Point

On December 29, 2022, President Biden signed the FDA Modernization Act 2.0 into law, formally removing the statutory requirement for animal testing in new drug applications. The legislation recognises that recent advancements in science have begun to offer increasingly viable alternatives to animal testing, and it opens the regulatory pathway for computational, organoid-based, and organ-on-chip approaches to serve as primary evidence in drug safety submissions.

This was not a symbolic gesture. The FDA followed in April 2025 with an announcement to phase out animal testing requirements for monoclonal antibodies and other drugs, promoting instead the use of lab-grown human organoids, organ-on-chip systems, and — critically — AI-driven in silico models. A March 2026 draft guidance further advanced this commitment, describing a framework for scientifically rigorous non-animal methods. The regulatory infrastructure for the post-animal era is now being actively constructed.

Why Animal Models Have Been Failing

The rate at which drug candidates succeed in animal models and then fail in human clinical trials has been a persistent crisis in pharmaceutical development. Approximately 90% of drugs that pass preclinical animal testing fail in human trials, with safety and efficacy failures accounting for the majority of attrition. The biological distance between rodents and humans, in receptor pharmacology, metabolic pathways, immune architecture, and genetic background, is the fundamental source of this translational gap.

A landmark 2017 study published in Frontiers in Physiology (Passini et al., PMC5601077) demonstrated this gap with precision. Comparing in silico human ventricular models against rabbit wedge preparations for predicting drug-induced cardiotoxicity across 62 reference compounds, the study found that human in silico drug trials achieved 89% accuracy in predicting clinical pro-arrhythmic risk — outperforming conventional animal assays, which showed approximately 75% accuracy across a comparable compound set. The in silico models correctly identified all high-risk compounds and produced far fewer false positives than animal-based QT prolongation assays.
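For readers less familiar with how such accuracy figures are derived, the arithmetic can be sketched as follows. This is a minimal illustration only: the function name `screening_metrics` and the confusion-matrix counts are invented for the example and are not taken from Passini et al.

```python
def screening_metrics(tp, fp, tn, fn):
    """Summarise a binary risk classifier from its confusion-matrix counts.

    tp: risky compounds flagged as risky      fn: risky compounds missed
    tn: safe compounds cleared as safe        fp: safe compounds flagged as risky
    """
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,      # share of all compounds classified correctly
        "sensitivity": tp / (tp + fn),      # share of risky compounds that were caught
        "specificity": tn / (tn + fp),      # share of safe compounds that were cleared
    }


# Hypothetical counts for 100 reference compounds (illustrative, not study data).
metrics = screening_metrics(tp=40, fp=6, tn=50, fn=4)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Accuracy alone can hide an imbalance between missed risky compounds and false alarms, which is why sensitivity and specificity are usually reported alongside it.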

What Precision Digital Animal Models Actually Are

Platforms such as Invitron (invitron.org) represent the operational realisation of this scientific shift. Precision digital animal models are not crude computational approximations of animals; they are built on three converging technologies:

Genetically-engineered in silico architecture — Digital models are constructed from the ground up using the actual genetic architecture of specific strains (C57BL/6J mice, Sprague-Dawley rats, and others), incorporating known single nucleotide polymorphisms, gene expression profiles, and strain-specific metabolic parameters. This is not a generic "mouse model" — it is a strain-specific computational organism.

Machine learning enhancement — The models are continuously refined through integration of experimental data. When a researcher runs a wet-lab assay, the results are fed back into the model's training pipeline, improving its predictive accuracy for that specific biological context. The model learns from the researcher's own data, creating a personalised in silico system that becomes more accurate over time.

LoRA-based fine-tuning — Platforms like Invitron incorporate LoRA (Low-Rank Adaptation) studios that allow researchers to fine-tune the underlying biological foundation models on their own experimental datasets without requiring full model retraining. This makes domain adaptation computationally accessible to individual research groups, not just large pharmaceutical companies with dedicated AI infrastructure.
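The idea behind low-rank adaptation can be sketched in a few lines of NumPy. This is a generic illustration of the LoRA technique on a single linear layer, not Invitron's implementation; the class name `LoRALinear`, the layer sizes, and the `rank` and `alpha` values are assumptions chosen for the example. The pretrained weight matrix stays frozen, and only two small matrices `A` and `B` would be trained.

```python
import numpy as np


class LoRALinear:
    """A frozen linear layer plus a trainable low-rank update (illustrative)."""

    def __init__(self, weight, rank=4, alpha=8.0, seed=0):
        rng = np.random.default_rng(seed)
        d_out, d_in = weight.shape
        self.weight = weight                                # frozen pretrained weights
        self.A = rng.normal(0.0, 0.01, size=(rank, d_in))   # trainable, small random init
        self.B = np.zeros((d_out, rank))                    # trainable, zero init
        self.scale = alpha / rank

    def forward(self, x):
        # Equivalent to x @ (W + scale * B @ A).T without forming the full update.
        return x @ self.weight.T + self.scale * (x @ self.A.T) @ self.B.T

    def trainable_params(self):
        return self.A.size + self.B.size


rng = np.random.default_rng(1)
weight = rng.normal(size=(64, 128))   # hypothetical pretrained layer: 128 inputs, 64 outputs
layer = LoRALinear(weight, rank=4)
x = rng.normal(size=(3, 128))

print("full layer parameters:", weight.size)
print("LoRA trainable parameters:", layer.trainable_params())
```

Because `B` starts at zero, the adapted layer initially reproduces the pretrained layer exactly, and fine-tuning only has to learn the small correction. In this toy layer the trainable parameter count drops from 8192 to 768, which is the property that makes fine-tuning feasible on modest hardware.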

The Practical Workflow

The workflow for using a precision digital animal model in drug discovery follows a structured pipeline. A researcher begins by selecting the appropriate in silico model — species, strain, genetic background, and disease phenotype. They then define the experimental parameters: compound, dose range, route of administration, and endpoints of interest. The platform simulates the experiment computationally, generating predicted pharmacokinetic and pharmacodynamic profiles, toxicity signals, and efficacy readouts.
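As a toy illustration of what simulating a dose range means, the sketch below uses a one-compartment intravenous bolus model, one of the simplest pharmacokinetic models. It is a deliberately reduced stand-in for a real platform's simulation, and every name and parameter value in it (`volume_l`, `k_el_per_h`, the doses and time points) is hypothetical.

```python
import math


def iv_bolus_concentration(dose_mg, volume_l, k_el_per_h, t_h):
    """Plasma concentration (mg/L) at time t_h after an IV bolus.

    One-compartment model with first-order elimination:
    C(t) = (dose / V) * exp(-k_el * t)
    """
    return (dose_mg / volume_l) * math.exp(-k_el_per_h * t_h)


def simulate_dose_range(doses_mg, volume_l, k_el_per_h, times_h):
    """Return a concentration-time profile for each dose in the range."""
    return {
        dose: [iv_bolus_concentration(dose, volume_l, k_el_per_h, t) for t in times_h]
        for dose in doses_mg
    }


# Hypothetical parameters chosen only to make the example run.
volume_l = 2.0
k_el_per_h = 0.35
half_life_h = math.log(2) / k_el_per_h
times_h = [0.0, 1.0, 2.0, 4.0, 8.0]

profiles = simulate_dose_range([1.0, 5.0, 10.0], volume_l, k_el_per_h, times_h)
for dose, profile in profiles.items():
    print(dose, [round(c, 3) for c in profile])
```

A production model would add absorption, distribution across tissues, strain-specific metabolism, and pharmacodynamic endpoints, but the structure is the same: fixed model parameters, a grid of experimental conditions, and a predicted profile for each condition.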

Critically, the researcher can then run a targeted wet-lab validation experiment — not to validate the drug candidate across all parameters, but to validate the model's predictions for their specific experimental context. The wet-lab data is then uploaded to refine the model, and the cycle continues. This closed-loop workflow dramatically reduces the number of animals required while increasing the statistical power of the data generated, because the computational model can explore a far larger parameter space than any animal study could practically cover.
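One step of this closed loop can be sketched as a parameter refit: a model parameter that started as a prior estimate is re-estimated from measured data, and the prediction error is compared before and after. The example below is a minimal sketch under strong assumptions. It uses a one-compartment elimination model, and the "wet-lab" observations are synthetic values generated inside the script, not real measurements; all names and numbers are hypothetical.

```python
import numpy as np


def fit_elimination(times_h, conc_mg_per_l):
    """Estimate (k_el, C0) by least squares on ln C = ln C0 - k_el * t."""
    slope, intercept = np.polyfit(times_h, np.log(conc_mg_per_l), 1)
    return float(-slope), float(np.exp(intercept))


# Prior elimination rate the model would have used before validation (assumed).
prior_k = 0.20

# Synthetic stand-in for wet-lab measurements, generated from assumed true values.
true_k, true_c0 = 0.35, 5.0
times = np.array([0.5, 1.0, 2.0, 4.0, 8.0])
observed = true_c0 * np.exp(-true_k * times)


def prediction_error(k, c0):
    """Largest absolute gap between predicted and observed concentrations."""
    return float(np.max(np.abs(c0 * np.exp(-k * times) - observed)))


error_before = prediction_error(prior_k, true_c0)

# Refinement step: update the model parameters from the uploaded data.
fitted_k, fitted_c0 = fit_elimination(times, observed)
error_after = prediction_error(fitted_k, fitted_c0)

print(f"k_el prior={prior_k:.2f}, refined={fitted_k:.2f}")
print(f"max error before={error_before:.3f}, after={error_after:.3g}")
```

Real measurements are noisy, so the refit would not recover the parameters exactly as it does here; the point is only the shape of the loop, in which a small validation experiment corrects the model used for the next round of simulations.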

Biosafety and Biosecurity Implications

From a biosafety and biosecurity perspective, precision digital animal models introduce both opportunities and considerations that the field is only beginning to address. On the opportunity side, in silico models enable the rapid evaluation of medical countermeasures against biological threat agents without requiring researchers to handle live pathogens in high-containment facilities. A digital model of a pathogen-infected organism can be used to screen antiviral or antibacterial candidates computationally, with only the most promising candidates requiring BSL-3 or BSL-4 validation.

The biosecurity consideration is dual-use in nature. The same computational infrastructure that enables rapid countermeasure development could, in principle, be used to model the effects of novel pathogens or toxins in silico. This is a recognised challenge in the field of AI-enabled biotechnology, and it underscores the importance of access controls, audit trails, and governance frameworks being built into platforms that provide in silico biological modelling capabilities from the outset.
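What built-in access controls and audit trails might look like at the smallest scale is sketched below: a role check before an action is allowed, and a hash-chained log in which editing any past entry invalidates the chain. This is an illustrative pattern only, not a description of any platform's actual controls; the role names, action names, and function names are all hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64

# Hypothetical role-to-permission mapping.
PERMISSIONS = {
    "researcher": {"run_simulation"},
    "biosafety_officer": {"run_simulation", "run_pathogen_model"},
}


def entry_hash(user, action, prev_hash):
    """SHA-256 over the entry contents and the previous entry's hash."""
    body = {"user": user, "action": action, "prev_hash": prev_hash}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def request_action(log, user, role, action):
    """Record every request in the log and return whether it was permitted."""
    allowed = action in PERMISSIONS.get(role, set())
    recorded = action if allowed else f"DENIED:{action}"
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "user": user,
        "action": recorded,
        "prev_hash": prev_hash,
        "hash": entry_hash(user, recorded, prev_hash),
    })
    return allowed


def verify(log):
    """Check that no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        expected = entry_hash(entry["user"], entry["action"], entry["prev_hash"])
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


log = []
first_ok = request_action(log, "alice", "researcher", "run_simulation")
second_ok = request_action(log, "alice", "researcher", "run_pathogen_model")
print(first_ok, second_ok, verify(log))
```

Denied requests are logged as well as permitted ones, since attempts to reach restricted capabilities are often the events a governance process most needs to see.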

The Road Ahead

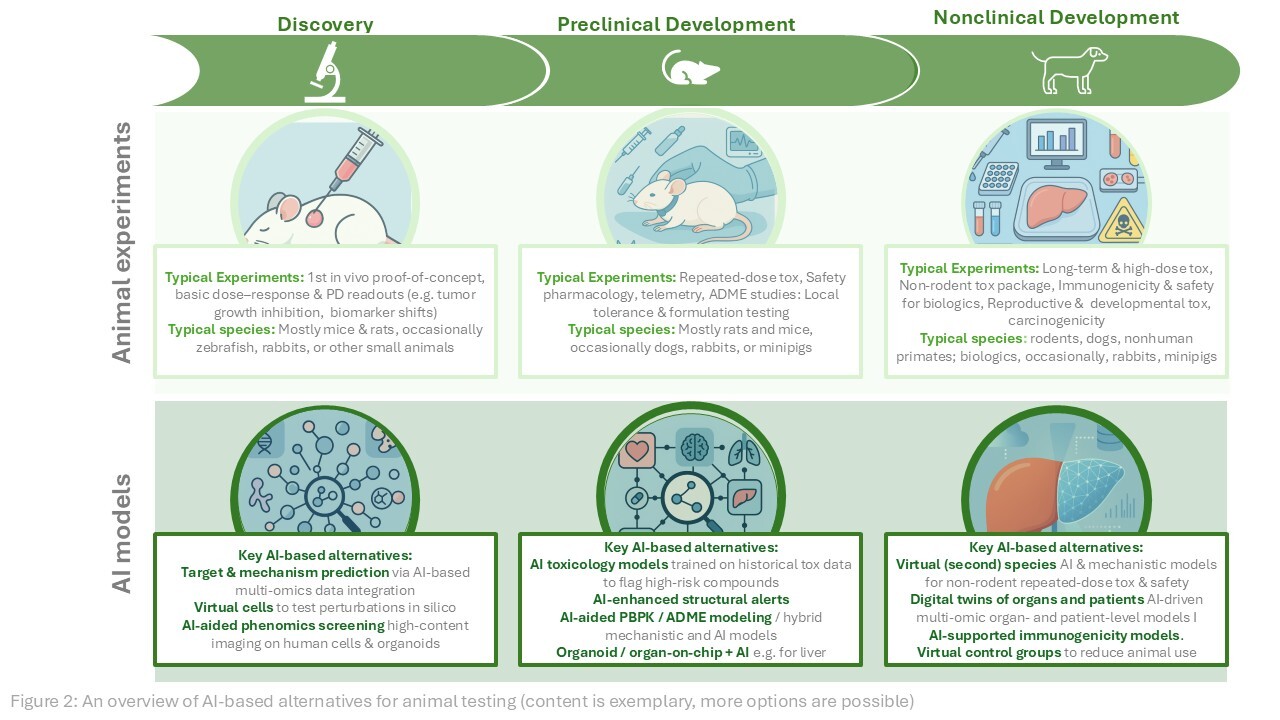

The transition from live animal testing to precision digital models is not a binary switch — it is a staged integration. For the foreseeable future, the most effective preclinical pipelines will combine in silico primary screening with targeted animal validation for the most promising candidates, and with organoid or organ-on-chip confirmation for specific toxicity endpoints. The goal is not the elimination of all biological validation, but the rational reduction of animal use to the experiments where biological complexity is genuinely irreplaceable.

What platforms like Invitron represent is the infrastructure layer for this transition: the ability to run thousands of virtual experiments in the time it takes to set up a single animal study, to personalise models with proprietary experimental data, and to do so within a regulatory framework that is now explicitly designed to accept the results. The century-old paradigm of animal testing as the default gateway to human trials is ending — not because of sentiment, but because the science has arrived.