Introduction

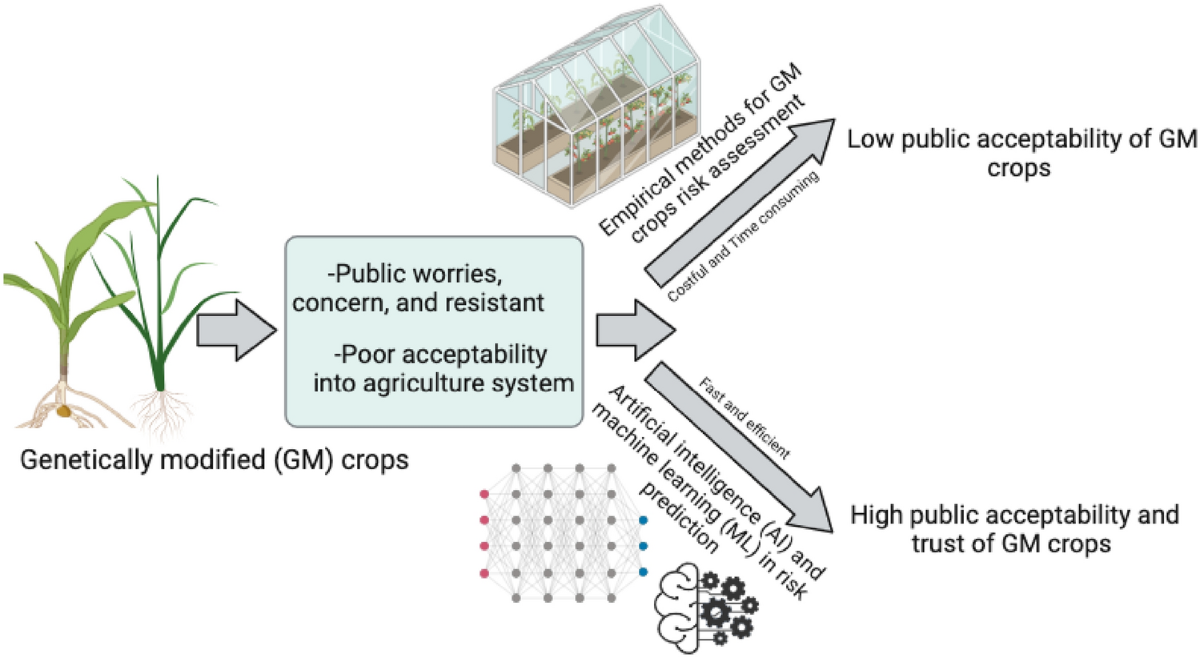

The global conversation about genetically modified organisms (GMOs) is one of the most polarised in modern science communication. Despite decades of rigorous safety assessments, peer-reviewed evidence, and regulatory consensus from bodies such as the World Health Organization, the European Food Safety Authority, and the U.S. Food and Drug Administration, public mistrust of GMOs persists at scale. Misinformation spreads rapidly across social media, often outpacing the capacity of scientists and communicators to respond. The question facing the scientific community is no longer whether AI can help counter this misinformation; it is whether the AI tools being deployed are trained well enough to help responsibly.

The answer lies in domain-specific training. General-purpose large language models, however capable, are not optimised for the nuanced, evidence-dense, and politically charged territory of GMO science communication. They can hallucinate citations, misrepresent regulatory positions, or inadvertently amplify the very myths they are meant to debunk. The solution is deliberate: curate high-quality, domain-specific datasets, fine-tune models on those datasets, and deploy them as specialised AI agents that communicate science with precision, balance, and authority.

This is precisely the vision behind the GMO Myths Demystification model — a fine-tuned AI agent trained on the joduor/gmo-faq-pairs dataset hosted on HuggingFace and supported by the adaptive intelligence platform at adaptionlabs.ai. This blog post explores why domain-specific training is not merely beneficial but essential for AI agents operating in high-stakes science communication contexts.

The Problem with General-Purpose AI in Science Communication

Large language models trained on broad internet corpora absorb the full spectrum of human discourse — including misinformation, conspiracy theories, and pseudoscience. When a user asks a general-purpose model about GMO safety, the model may produce a response that superficially sounds balanced but subtly validates unfounded concerns by treating fringe positions as equivalent to scientific consensus. This "false balance" is one of the most damaging patterns in science communication, and it is deeply embedded in models trained without domain curation.

Studies in natural language processing generally report that models fine-tuned on curated, expert-validated datasets outperform their base counterparts on domain-specific accuracy, citation fidelity, and resistance to adversarial prompting. For GMO communication specifically, this is the difference between a model that correctly explains that Bt maize expresses a protein, encoded by a gene from the bacterium Bacillus thuringiensis, that is toxic to specific insect pests but harmless to humans, and one that hedges unnecessarily by suggesting the science is "still debated" when it is not.

HuggingFace as the Infrastructure of Open AI Science

HuggingFace has become the de facto infrastructure layer for open AI development. Its model hub, dataset repository, and Spaces platform provide researchers, educators, and science communicators with the tools to build, share, and deploy specialised AI agents without requiring enterprise-scale compute budgets. For scientists working in agricultural biotechnology, biosafety, and public health, HuggingFace democratises access to the fine-tuning pipeline that was previously available only to well-funded technology companies.

The joduor/gmo-faq-pairs dataset exemplifies this democratisation. Comprising 15 carefully curated question-and-answer pairs covering GMO definitions, safety assessments, agricultural benefits, regulatory labelling policies, and environmental risk, the dataset was remastered using the Adaptive Data platform from adaptionlabs.ai — achieving a quality grade of B with a 64% relative quality improvement over the raw source data. The dataset spans science (60%) and agriculture (40%) domains, with tones calibrated to be informative (40%), explanatory (33%), and balanced (27%) — precisely the register that effective science communication requires.

| Dataset Attribute | Value |
|---|---|
| Total data points | 15 preference training pairs |
| Domain split | Science 60%, Agriculture 40% |
| Quality grade | B (64% relative improvement) |
| Tone distribution | Informative 40%, Explanatory 33%, Balanced 27% |
| Language | English 100% |
| Platform | HuggingFace (joduor/gmo-faq-pairs) |
| Remastering platform | AdaptionLabs.ai Adaptive Data |
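Because the dataset is small, the reported percentages can be translated back into whole pairs. The sketch below does that arithmetic, assuming each percentage is a rounded share of the 15 pairs (the exact per-category counts are not stated here and should be confirmed on the dataset card). It checks that the implied counts sum to 15 and re-round to the reported figures.

```python
# Implied per-category counts for a 15-pair dataset.
# Assumption: the reported percentages are rounded shares of whole pairs.
TOTAL_PAIRS = 15

reported_shares = {
    "domain": {"science": 60, "agriculture": 40},
    "tone": {"informative": 40, "explanatory": 33, "balanced": 27},
}


def implied_counts(shares: dict[str, int], total: int) -> dict[str, int]:
    """Convert rounded percentage shares back to whole-item counts."""
    return {label: round(total * pct / 100) for label, pct in shares.items()}


def round_trip_ok(counts: dict[str, int], shares: dict[str, int], total: int) -> bool:
    """True if the counts sum to the total and re-round to the reported percentages."""
    if sum(counts.values()) != total:
        return False
    return all(round(100 * counts[label] / total) == shares[label] for label in shares)


for attribute, shares in reported_shares.items():
    counts = implied_counts(shares, TOTAL_PAIRS)
    print(attribute, counts, round_trip_ok(counts, shares, TOTAL_PAIRS))
```

Under that assumption, the split works out to 9 science and 6 agriculture pairs, and to 6 informative, 5 explanatory, and 4 balanced pairs. Both breakdowns are consistent with the table.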

Why Preference Training Matters for Science Communication

The joduor/gmo-faq-pairs dataset is structured specifically for preference training. Each entry contains not only a chosen (correct, evidence-based) response but also a rejected (inaccurate, misleading, or overly hedged) response. Paired data of this kind underpins two of the most widely used techniques for aligning language models with human values and expert knowledge. Reinforcement Learning from Human Feedback (RLHF) uses it to train a reward model, and Direct Preference Optimisation (DPO) optimises the language model on the pairs directly.
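To make the chosen/rejected structure concrete, here is a minimal Python sketch of a single preference record with a basic sanity check. The field names `prompt`, `chosen`, and `rejected` follow a layout commonly used for DPO-style training. They are an assumption, not the confirmed schema of joduor/gmo-faq-pairs. The example text is illustrative, adapted from the Bt maize discussion above, and is not taken from the dataset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PreferencePair:
    """One preference-training example.

    Hypothetical field names: the prompt/chosen/rejected layout is assumed,
    not read from the dataset card.
    """
    prompt: str
    chosen: str    # accurate, evidence-based answer
    rejected: str  # inaccurate, misleading, or overly hedged answer

    def problems(self) -> list[str]:
        """Return reasons this pair cannot carry a preference signal (empty if fine)."""
        issues = [f"{name} is empty" for name in ("prompt", "chosen", "rejected")
                  if not getattr(self, name).strip()]
        # Identical responses give the model nothing to prefer between.
        if self.chosen.strip() == self.rejected.strip():
            issues.append("chosen and rejected are identical")
        return issues


# Illustrative record, not an entry from joduor/gmo-faq-pairs.
example = PreferencePair(
    prompt="Is Bt maize safe for people to eat?",
    chosen=("Bt maize expresses a protein, encoded by a gene from Bacillus thuringiensis, "
            "that is toxic to specific insect pests but harmless to humans."),
    rejected="The safety of Bt maize is still debated among scientists.",
)
print(example.problems())  # → []
```

A check like this is cheap to run over every record before training, because a pair with an empty or duplicated response contributes no usable preference signal.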

For GMO science communication, preference training is particularly powerful because it teaches the model not just what to say, but what not to say. A model trained with preference data learns to reject responses that falsely link GMO crops to health risks unsupported by evidence. It also learns to avoid amplifying the precautionary framing that conflates regulatory caution with scientific uncertainty, and to produce responses that are accurate, accessible, and appropriately confident. These are the hallmarks of effective science communication.
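The "what not to say" effect follows from the DPO objective. As a minimal sketch (plain Python, not the implementation used to train this model), the per-example loss is −log σ(β·[(log π_θ(y_w|x) − log π_ref(y_w|x)) − (log π_θ(y_l|x) − log π_ref(y_l|x))]). Here y_w is the chosen response, y_l the rejected one, π_θ the model being trained, π_ref a frozen reference model, and β a strength parameter. The default β = 0.1 and the log-probabilities in the usage lines are made-up illustrative values.

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Per-example DPO loss: -log sigmoid(beta * (chosen log-ratio - rejected log-ratio)).

    Each *_logp is the summed log-probability of a full response given the
    prompt, under the trainable policy or the frozen reference model.
    """
    chosen_log_ratio = policy_chosen_logp - ref_chosen_logp
    rejected_log_ratio = policy_rejected_logp - ref_rejected_logp
    z = beta * (chosen_log_ratio - rejected_log_ratio)
    # -log(sigmoid(z)) == softplus(-z)
    return softplus(-z)


# Made-up log-probabilities, not from any trained model.
start = dpo_loss(-40.0, -35.0, -40.0, -35.0)  # policy identical to reference
after = dpo_loss(-38.0, -45.0, -40.0, -35.0)  # chosen more likely, rejected less likely
print(round(start, 4), after < start)  # → 0.6931 True
```

The policy can lower the loss by raising its probability of the chosen answer relative to the reference, by lowering its probability of the rejected answer, or both. This is how rejected responses, such as overly hedged ones, actively shape the model's behaviour rather than simply being absent from its training data.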

AdaptionLabs.ai supports this approach through its philosophy of adaptive, continually learning AI systems. Rather than freezing intelligence in static training data, AdaptionLabs builds systems that can dynamically shape datasets to target new objectives — a capability that is especially valuable as the GMO regulatory landscape evolves and new crop varieties enter the market.

The GMO Myths Demystification Model: A Case Study

The GMO Myths Demystification model represents a practical application of these principles. By fine-tuning on the joduor/gmo-faq-pairs dataset, the model has been trained to handle the most common misconceptions about GMOs with factual precision: the claim that GMOs are inherently unsafe, the assertion that herbicide-tolerant crops inevitably increase herbicide use, the misconception that gene flow from GM crops is uncontrolled and catastrophic, and the confusion between gene editing technologies like CRISPR and traditional transgenic approaches.

Each of these topics is represented in the dataset by carefully structured preference pairs that model the reasoning process of an expert science communicator. The chosen responses do not just provide the correct answer; they also explain the evidence base, acknowledge legitimate areas of ongoing research, and direct users toward authoritative sources.

Conclusion

The deployment of AI agents in GMO science communication is not a question of technological capability — it is a question of training quality. General-purpose models are insufficient for a domain where precision, evidence fidelity, and resistance to misinformation are non-negotiable. Domain-specific datasets like joduor/gmo-faq-pairs on HuggingFace, remastered with adaptive intelligence tools from adaptionlabs.ai, provide the foundation for AI agents that can genuinely serve the public interest in science communication. The GMO Myths Demystification model is a proof of concept — and an invitation to the broader scientific community to invest in the data infrastructure that trustworthy AI science communication requires.

References

- joduor/gmo-faq-pairs Dataset — HuggingFace: https://huggingface.co/datasets/joduor/gmo-faq-pairs

- AdaptionLabs.ai — Adaptive Intelligence Platform: https://adaptionlabs.ai/

- WHO — Frequently asked questions on genetically modified foods: https://www.who.int/news-room/questions-and-answers/item/food-genetically-modified

- EFSA — GMO Panel: https://www.efsa.europa.eu/en/topics/topic/gmos